What does ANOVA compare? ANOVA, or Analysis of Variance, compares the means of two or more groups to determine whether there is a statistically significant difference between them, and it offers a robust alternative to running multiple t-tests. This guide from COMPARE.EDU.VN covers how ANOVA works, the main types of ANOVA, its assumptions, and how it helps in making informed decisions.

1. Understanding the Basics of ANOVA

1.1. The Purpose of ANOVA

ANOVA, or Analysis of Variance, is a statistical test used to analyze the differences between the means of two or more groups. It’s a powerful tool when you want to determine if there’s a significant difference between the average values of different populations or treatments. Unlike t-tests, which are limited to comparing two groups, ANOVA can handle multiple groups simultaneously. This makes it particularly useful in experiments or studies where you have several different conditions or treatments being compared. The main goal of ANOVA is to determine if the variability between the group means is larger than what you would expect by chance. If the variability is large enough, it suggests that there is a real difference between the groups.

1.2. ANOVA vs. T-tests

While both ANOVA and t-tests are used to compare means, they differ in their applicability. A t-test is suitable for comparing the means of only two groups. If you have more than two groups, running a separate t-test for every pair inflates the overall Type I error rate, also known as the false positive rate. Each t-test has a certain probability (the significance level, usually 0.05) of incorrectly rejecting the null hypothesis, i.e., concluding there is a significant difference when there isn’t one. These risks compound across tests: with m independent tests at significance level α, the probability of at least one false positive is 1 – (1 – α)^m, so three pairwise tests at α = 0.05 already carry a risk of about 14%. ANOVA avoids this by testing all groups with a single overall test at the chosen significance level. It does this by comparing the variance between the groups to the variance within the groups, which allows it to determine whether there is an overall significant difference without the increased risk of false positives associated with multiple t-tests.
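The inflation of the false positive rate can be made concrete with a few lines of Python. This is a minimal sketch that assumes the individual tests are independent (pairwise t-tests on shared groups are not strictly independent, so treat the numbers as an approximation); the function name `familywise_error_rate` is an illustrative choice, not part of any library.

```python
from math import comb


def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Chance of at least one false positive across n_tests independent tests."""
    return 1 - (1 - alpha) ** n_tests


for k in (3, 4, 5):
    m = comb(k, 2)  # number of pairwise t-tests needed for k groups
    rate = familywise_error_rate(0.05, m)
    print(f"{k} groups -> {m} pairwise tests, familywise error rate ~ {rate:.3f}")
```

The number of pairwise tests grows quickly with the number of groups, which is why a single overall test is attractive.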

1.3. Core Components of ANOVA

ANOVA relies on several key components to analyze the differences between group means:

- Total Variance: This is the overall variability in the dataset. It is based on the sum of the squared differences between each individual data point and the overall mean of the entire dataset.

- Between-Group Variance: This measures the variability between the means of the different groups. It represents how much the group means differ from each other.

- Within-Group Variance: This measures the variability within each group. It represents how much the individual data points within each group differ from their respective group mean.

ANOVA works by partitioning the total variance into these two components: between-group variance and within-group variance. By comparing these two variances, ANOVA can determine if the differences between the group means are statistically significant. If the between-group variance is significantly larger than the within-group variance, it suggests that there is a real difference between the group means.

2. The Underlying Principles of ANOVA

2.1. Null and Alternative Hypotheses

In ANOVA, the null hypothesis (H0) states that there is no significant difference between the means of the groups being compared. In other words, it assumes that all the group means are equal. The alternative hypothesis (Ha) states that there is a significant difference between at least two of the group means. It doesn’t specify which groups are different, only that at least one group mean is different from the others. ANOVA tests the null hypothesis by comparing the variability between the group means to the variability within the groups. If the variability between the group means is large enough, the null hypothesis is rejected, and it is concluded that there is a significant difference between at least two of the group means.

2.2. Understanding Sum of Squares

The sum of squares (SS) is a fundamental concept in ANOVA. It measures the total variability in the data by summing the squared differences between each data point and a reference point, such as the mean. In ANOVA, there are three types of sum of squares:

- Sum of Squares Total (SST): This measures the total variability in the dataset. It is calculated by summing the squared differences between each individual data point and the overall mean of the entire dataset.

- Sum of Squares Between (SSB): This measures the variability between the means of the different groups. It is calculated by summing the squared differences between each group mean and the overall mean, weighted by the number of data points in each group.

- Sum of Squares Within (SSW): This measures the variability within each group. It is calculated by summing the squared differences between each individual data point and its respective group mean.

The relationship between these three types of sum of squares is: SST = SSB + SSW. This equation shows how the total variability in the data is partitioned into the variability between the groups and the variability within the groups.
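The partition SST = SSB + SSW can be checked by hand on a small dataset. The sketch below uses only the Python standard library; the three groups and their values are made-up numbers chosen for illustration, not data from any study.

```python
from statistics import mean

# Hypothetical scores for three groups (made-up numbers for illustration).
groups = {
    "A": [4, 5, 6],
    "B": [6, 7, 8],
    "C": [8, 9, 10],
}

all_values = [x for values in groups.values() for x in values]
grand_mean = mean(all_values)

# SST: squared distance of every observation from the overall mean.
sst = sum((x - grand_mean) ** 2 for x in all_values)

# SSB: squared distance of each group mean from the overall mean,
# weighted by the number of observations in that group.
ssb = sum(len(v) * (mean(v) - grand_mean) ** 2 for v in groups.values())

# SSW: squared distance of every observation from its own group mean.
ssw = sum((x - mean(v)) ** 2 for v in groups.values() for x in v)

print(f"SST = {sst}, SSB = {ssb}, SSW = {ssw}, SSB + SSW = {ssb + ssw}")
```

For these numbers SST is 30, SSB is 24, and SSW is 6, so most of the variability comes from differences between the groups.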

2.3. Degrees of Freedom in ANOVA

Degrees of freedom (df) represent the number of independent pieces of information available to estimate a parameter. In ANOVA, there are different degrees of freedom associated with each source of variation:

- Degrees of Freedom Total (dfT): This is the total number of data points minus 1 (N – 1), where N is the total number of data points in the dataset.

- Degrees of Freedom Between (dfB): This is the number of groups minus 1 (k – 1), where k is the number of groups being compared.

- Degrees of Freedom Within (dfW): This is the total number of data points minus the number of groups (N – k).

The degrees of freedom are used to calculate the mean squares, which are the sum of squares divided by their respective degrees of freedom. The mean squares are used to calculate the F-statistic, which is the test statistic used in ANOVA.

2.4. The F-Statistic Explained

The F-statistic is the test statistic used in ANOVA. It is calculated by dividing the mean square between (MSB) by the mean square within (MSW): F = MSB / MSW. The F-statistic represents the ratio of the variability between the group means to the variability within the groups. A large F-statistic indicates that the variability between the group means is large compared to the variability within the groups, suggesting that there is a significant difference between the group means. The F-statistic follows an F-distribution, which is a probability distribution that depends on the degrees of freedom between and within. The p-value associated with the F-statistic is the probability of observing an F-statistic as large as, or larger than, the one calculated from the data, assuming that the null hypothesis is true. If the p-value is less than the significance level (usually 0.05), the null hypothesis is rejected, and it is concluded that there is a significant difference between at least two of the group means.
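As a rough illustration of how the degrees of freedom, mean squares, and F = MSB / MSW fit together, the following standard-library sketch computes the F-statistic for a one-way layout. The helper name `one_way_f` and the sample values are hypothetical; the p-value is left out because it requires the F-distribution, which statistical libraries provide.

```python
from statistics import mean


def one_way_f(samples):
    """Return (F, df_between, df_within) for a list of group samples."""
    all_values = [x for group in samples for x in group]
    grand_mean = mean(all_values)
    k, n = len(samples), len(all_values)

    ssb = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in samples)
    ssw = sum((x - mean(g)) ** 2 for g in samples for x in g)

    df_between, df_within = k - 1, n - k
    msb = ssb / df_between  # mean square between
    msw = ssw / df_within   # mean square within
    return msb / msw, df_between, df_within


# Made-up data: three groups of three observations each.
f_stat, df_b, df_w = one_way_f([[4, 5, 6], [6, 7, 8], [8, 9, 10]])
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

Here MSB is 24 / 2 = 12 and MSW is 6 / 6 = 1, giving F = 12 on 2 and 6 degrees of freedom.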

3. Types of ANOVA

3.1. One-Way ANOVA

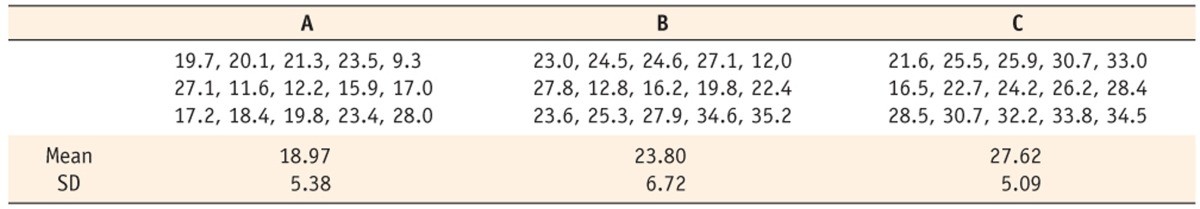

One-way ANOVA is used when you have one independent variable (factor) with two or more levels (groups) and one dependent variable. The goal is to determine if there is a significant difference between the means of the groups. For example, you might use one-way ANOVA to compare the test scores of students who were taught using three different teaching methods. In this case, the independent variable is the teaching method (with three levels: method A, method B, and method C), and the dependent variable is the test score. One-way ANOVA tests the null hypothesis that the means of the groups are equal. If the null hypothesis is rejected, it means that there is a significant difference between at least two of the group means. However, one-way ANOVA does not tell you which specific groups are different from each other. To determine which groups are different, you need to perform post-hoc tests, such as Tukey’s HSD or Bonferroni correction.

3.2. Two-Way ANOVA

Two-way ANOVA is used when you have two independent variables (factors) and one dependent variable. The goal is to determine if there is a significant effect of each independent variable on the dependent variable, as well as if there is a significant interaction effect between the two independent variables. An interaction effect occurs when the effect of one independent variable on the dependent variable depends on the level of the other independent variable. For example, you might use two-way ANOVA to study the effect of two different types of fertilizer (A and B) and two different watering schedules (daily and weekly) on the growth of plants. In this case, the independent variables are the type of fertilizer (with two levels: A and B) and the watering schedule (with two levels: daily and weekly), and the dependent variable is the plant growth. Two-way ANOVA tests three null hypotheses:

- There is no significant effect of the first independent variable on the dependent variable.

- There is no significant effect of the second independent variable on the dependent variable.

- There is no significant interaction effect between the two independent variables.

If any of these null hypotheses are rejected, it means that there is a significant effect of the corresponding independent variable or interaction effect on the dependent variable.

3.3. Repeated Measures ANOVA

Repeated measures ANOVA is used when you have one independent variable (factor) and one dependent variable, and the same subjects are measured multiple times under different conditions or at different time points. This type of ANOVA is used when you want to determine if there is a significant change in the dependent variable over time or under different conditions. For example, you might use repeated measures ANOVA to study the effect of a new drug on blood pressure. In this case, the independent variable is the time point (e.g., before treatment, after 1 week of treatment, after 2 weeks of treatment), and the dependent variable is the blood pressure. The same subjects are measured at each time point. Repeated measures ANOVA tests the null hypothesis that there is no significant change in the dependent variable over time or under different conditions. Repeated measures ANOVA is different from one-way ANOVA because it takes into account the correlation between the repeated measurements on the same subjects. This makes it more powerful than one-way ANOVA when analyzing repeated measures data.

3.4. MANOVA (Multivariate Analysis of Variance)

MANOVA is used when you have one or more independent variables (factors) and two or more dependent variables. The goal is to determine if there is a significant effect of the independent variables on the set of dependent variables, taking into account the correlations between the dependent variables. MANOVA is an extension of ANOVA to the multivariate case, where there are multiple dependent variables. For example, you might use MANOVA to study the effect of two different types of exercise (aerobic and strength training) on both blood pressure and cholesterol levels. In this case, the independent variable is the type of exercise (with two levels: aerobic and strength training), and the dependent variables are blood pressure and cholesterol levels. MANOVA tests the null hypothesis that there is no significant effect of the independent variables on the set of dependent variables. Because it takes the correlations between the dependent variables into account, MANOVA can have more power than separate ANOVAs run on each dependent variable.

ANOVA statistic components represented by a pie chart

4. Assumptions of ANOVA

4.1. Normality

The assumption of normality in ANOVA states that the data within each group should be approximately normally distributed. This means that the distribution of the data should be roughly symmetrical and bell-shaped. Normality is important because ANOVA relies on the assumption that the data comes from a normal distribution to accurately calculate the F-statistic and p-value. If the data is not normally distributed, the results of the ANOVA may be unreliable. There are several ways to check for normality, including:

- Histograms: Create a histogram of the data for each group. If the histogram is approximately symmetrical and bell-shaped, the data is likely normally distributed.

- Q-Q Plots: Create a Q-Q plot of the data for each group. If the data points fall close to the diagonal line, the data is likely normally distributed.

- Shapiro-Wilk Test: Perform a Shapiro-Wilk test for each group. This test assesses whether the data is significantly different from a normal distribution. A p-value greater than 0.05 suggests that the data is not significantly different from a normal distribution.
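The Shapiro-Wilk check can be sketched in Python with SciPy's `scipy.stats.shapiro`, run once per group. The group names and values below are made up for illustration, and 0.05 is simply the conventional threshold mentioned above.

```python
from scipy import stats

# Made-up measurements for three groups.
groups = {
    "A": [5.1, 4.8, 5.6, 5.0, 4.7, 5.3, 5.2, 4.9],
    "B": [6.2, 5.9, 6.8, 6.1, 6.4, 5.7, 6.0, 6.5],
    "C": [7.0, 7.4, 6.6, 7.1, 6.9, 7.3, 7.8, 6.7],
}

normality_p = {}
for name, values in groups.items():
    statistic, p_value = stats.shapiro(values)
    normality_p[name] = p_value
    verdict = "no evidence against normality" if p_value > 0.05 else "possible non-normality"
    print(f"group {name}: W = {statistic:.3f}, p = {p_value:.3f} ({verdict})")
```

With small samples the test has little power, so it is usually worth looking at a histogram or Q-Q plot as well.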

If the data is not normally distributed, there are several options:

- Transform the data: Apply a mathematical transformation to the data, such as a logarithmic or square root transformation, to make it more normally distributed.

- Use a non-parametric test: Use a non-parametric test, such as the Kruskal-Wallis test, which does not assume normality.

- Use a robust ANOVA: Use a robust ANOVA method, which is less sensitive to violations of the normality assumption.

4.2. Homogeneity of Variance

The assumption of homogeneity of variance in ANOVA states that the variances of the data within each group should be approximately equal. This means that the spread of the data should be similar across all groups. Homogeneity of variance is important because ANOVA relies on the assumption that the variances are equal to accurately calculate the F-statistic and p-value. If the variances are not equal, the results of the ANOVA may be unreliable. There are several ways to check for homogeneity of variance, including:

- Levene’s Test: Perform Levene’s test. This test assesses whether the variances of the groups are significantly different. A p-value greater than 0.05 suggests that the variances are not significantly different.

- Bartlett’s Test: Perform Bartlett’s test. This test is more sensitive to departures from normality than Levene’s test, so it should only be used if the data is approximately normally distributed. A p-value greater than 0.05 suggests that the variances are not significantly different.

- Visual Inspection: Create boxplots of the data for each group. If the boxes have approximately the same size, the variances are likely equal.
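Both variance tests are available in SciPy as `scipy.stats.levene` and `scipy.stats.bartlett`. The sketch below runs them on made-up samples; note that SciPy's Levene test centers on the median by default, which is an implementation detail of that library rather than something stated in this guide.

```python
from scipy import stats

# Made-up samples; group b is deliberately more spread out.
a = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2]
b = [12.5, 8.0, 15.2, 9.1, 13.8, 7.4]
c = [11.0, 11.4, 10.7, 11.2, 10.9, 11.1]

levene_result = stats.levene(a, b, c)      # less sensitive to non-normal data
bartlett_result = stats.bartlett(a, b, c)  # assumes approximately normal data

print(f"Levene:   W = {levene_result.statistic:.3f}, p = {levene_result.pvalue:.4f}")
print(f"Bartlett: T = {bartlett_result.statistic:.3f}, p = {bartlett_result.pvalue:.4f}")
```

A p-value below the chosen significance level in either test suggests the equal-variance assumption is questionable for these groups.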

If the variances are not equal, there are several options:

- Transform the data: Apply a mathematical transformation to the data, such as a logarithmic or square root transformation, to make the variances more equal.

- Use Welch’s ANOVA: Use Welch’s ANOVA, which is a modification of ANOVA that does not assume equal variances.

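- Use a non-parametric test: Use a non-parametric test, such as the Kruskal-Wallis test, which does not assume normality. Note that it is not fully insensitive to unequal variances: when the groups differ strongly in spread, a significant result may reflect a difference in distribution shape rather than in location.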

4.3. Independence of Observations

The assumption of independence of observations in ANOVA states that the data points within each group should be independent of each other. This means that the value of one data point should not be influenced by the value of any other data point. Independence of observations is important because ANOVA relies on the assumption that the data points are independent to accurately calculate the F-statistic and p-value. If the data points are not independent, the results of the ANOVA may be unreliable. There are several ways to check for independence of observations:

- Random Sampling: Ensure that the data was collected using a random sampling method. This helps to ensure that the data points are independent of each other.

- Study Design: Ensure that the study design does not introduce any dependence between the data points. For example, if the same subjects are measured multiple times, the data points may not be independent.

If the data points are not independent, there are several options:

- Use a repeated measures ANOVA: Use a repeated measures ANOVA, which is designed for data where the same subjects are measured multiple times.

- Use a mixed-effects model: Use a mixed-effects model, which can handle data with both fixed and random effects.

5. Conducting an ANOVA Test

5.1. Setting Up the Data

Before conducting an ANOVA test, it is important to properly set up the data. This involves organizing the data into a format that can be easily analyzed by statistical software. The data should be organized into columns, with each column representing a different variable. One column should represent the independent variable (grouping variable), and another column should represent the dependent variable. The independent variable identifies the group each observation belongs to. It can be stored as text labels or as numeric codes (for example, 1, 2, and 3 for three groups), but the software must treat it as a categorical variable (a factor) rather than as a continuous number. The dependent variable should be measured on a continuous scale.
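As a small illustration of this layout, the sketch below stores one observation per row (a group label plus a measured value) and then collects the values for each group. The labels and scores are invented, and plain Python structures are used only to keep the example dependency-free; a spreadsheet or data frame follows the same idea.

```python
from collections import defaultdict

# Long format: one row per observation (group label, dependent variable).
rows = [
    ("method_A", 78.0), ("method_A", 82.5), ("method_A", 75.0),
    ("method_B", 85.0), ("method_B", 88.5), ("method_B", 84.0),
    ("method_C", 90.0), ("method_C", 86.5), ("method_C", 91.0),
]

# Group the dependent variable by the categorical grouping variable.
samples = defaultdict(list)
for label, score in rows:
    samples[label].append(score)

for label, scores in samples.items():
    print(label, scores)
```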

5.2. Running ANOVA in Statistical Software

ANOVA can be run using a variety of statistical software packages, such as SPSS, R, and Python. The specific steps for running ANOVA will vary depending on the software package being used, but the general process is the same. First, you need to import the data into the software. Then, you need to specify the independent and dependent variables. Finally, you need to run the ANOVA test. The software will then output the results of the ANOVA test, including the F-statistic, p-value, and degrees of freedom.
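In Python, for instance, one common route for a one-way ANOVA is SciPy's `scipy.stats.f_oneway`, which takes one sequence of values per group. The sketch below uses made-up scores and hypothetical variable names; it is one way to do it, not the only one.

```python
from scipy import stats

# Made-up scores for three groups.
method_a = [4, 5, 6]
method_b = [6, 7, 8]
method_c = [8, 9, 10]

result = stats.f_oneway(method_a, method_b, method_c)

# Degrees of freedom are not returned by f_oneway, so compute them directly.
k = 3
n = len(method_a) + len(method_b) + len(method_c)
print(f"F({k - 1}, {n - k}) = {result.statistic:.2f}, p = {result.pvalue:.4f}")
```

If the printed p-value is below the chosen significance level, the overall test indicates that at least one group mean differs.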

5.3. Interpreting the Results

The results of the ANOVA test should be interpreted carefully. The most important result is the p-value. If the p-value is less than the significance level (usually 0.05), the null hypothesis is rejected, and it is concluded that there is a significant difference between at least two of the group means. However, ANOVA does not tell you which specific groups are different from each other. To determine which groups are different, you need to perform post-hoc tests.

5.4. Post-Hoc Tests

Post-hoc tests are used to determine which specific groups are different from each other after ANOVA has shown that there is a significant difference between at least two of the group means. There are a variety of post-hoc tests available, each with its own strengths and weaknesses. Some common post-hoc tests include:

- Tukey’s HSD: This test is a good choice when you want to compare all possible pairs of groups.

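- Bonferroni Correction: This approach adjusts the significance level (or the p-values) for the number of comparisons made. It is generally more conservative than Tukey’s HSD when all pairs are compared, and it is a good choice when you have a small number of planned comparisons.

- Scheffe’s Test: This is the most conservative of the three. It is a good choice when you want to test complex contrasts (for example, one group against the average of several others), not just pairwise differences.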

The choice of which post-hoc test to use depends on the specific research question and the characteristics of the data.
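As one illustration of a post-hoc comparison, recent SciPy releases (1.8 and later, as far as we are aware) provide `scipy.stats.tukey_hsd`. The sketch below applies it to made-up data and prints the adjusted p-value for every pair of groups; the labels are hypothetical.

```python
from scipy.stats import tukey_hsd

# Made-up scores for three groups.
a = [4, 5, 6]
b = [6, 7, 8]
c = [8, 9, 10]
labels = ["A", "B", "C"]

res = tukey_hsd(a, b, c)

# res.pvalue is a square matrix: entry [i, j] compares group i with group j.
for i in range(len(labels)):
    for j in range(i + 1, len(labels)):
        print(f"{labels[i]} vs {labels[j]}: p = {res.pvalue[i, j]:.4f}")
```

In this toy dataset only the two most distant groups (A and C) differ at the 0.05 level, even though the overall ANOVA is significant.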

6. Real-World Applications of ANOVA

6.1. Medical Research

In medical research, ANOVA is frequently used to compare the effectiveness of different treatments or interventions. For example, researchers might use ANOVA to compare the effectiveness of three different drugs in treating a particular condition. The independent variable would be the type of drug (with three levels: drug A, drug B, and drug C), and the dependent variable would be a measure of the condition, such as symptom severity or survival rate. ANOVA can help researchers determine if there is a significant difference in the effectiveness of the different drugs.

6.2. Business and Marketing

In business and marketing, ANOVA is used to analyze the impact of different marketing strategies or product features on customer behavior. For example, a company might use ANOVA to compare the sales of a product in three different markets, each using a different marketing campaign. The independent variable would be the marketing campaign (with three levels: campaign A, campaign B, and campaign C), and the dependent variable would be the sales of the product. ANOVA can help the company determine if there is a significant difference in the effectiveness of the different marketing campaigns.

6.3. Education

In education, ANOVA is used to evaluate the effectiveness of different teaching methods or educational programs. For example, researchers might use ANOVA to compare the test scores of students who were taught using two different teaching methods. The independent variable would be the teaching method (with two levels: method A and method B), and the dependent variable would be the test score. ANOVA can help researchers determine if there is a significant difference in the effectiveness of the different teaching methods.

6.4. Agriculture

In agriculture, ANOVA is used to compare the yields of different crops under different growing conditions. For example, researchers might use ANOVA to compare the yields of three different varieties of wheat grown in the same field. The independent variable would be the variety of wheat (with three levels: variety A, variety B, and variety C), and the dependent variable would be the yield of wheat. ANOVA can help researchers determine if there is a significant difference in the yields of the different varieties of wheat.

7. Advantages and Disadvantages of ANOVA

7.1. Benefits of Using ANOVA

- Handles Multiple Groups: ANOVA can compare the means of two or more groups, unlike t-tests which are limited to two groups.

- Controls Type I Error Rate: ANOVA controls the Type I error rate (false positive rate) when comparing multiple groups, unlike performing multiple t-tests which can inflate the Type I error rate.

- Provides a Global Test: ANOVA provides a global test of whether there is a significant difference between any of the group means.

- Can Analyze Complex Designs: ANOVA can be used to analyze complex experimental designs, such as factorial designs with multiple independent variables.

7.2. Limitations of ANOVA

- Assumptions: ANOVA relies on several assumptions, such as normality, homogeneity of variance, and independence of observations. If these assumptions are violated, the results of the ANOVA may be unreliable.

- Does Not Identify Specific Differences: ANOVA does not tell you which specific groups are different from each other. To determine which groups are different, you need to perform post-hoc tests.

- Can Be Sensitive to Outliers: ANOVA can be sensitive to outliers, which are data points that are far away from the other data points. Outliers can have a large impact on the results of the ANOVA.

8. How COMPARE.EDU.VN Simplifies Comparisons

8.1. Objective Comparisons

At COMPARE.EDU.VN, we understand the importance of objective comparisons. That’s why we provide detailed and unbiased analyses of various products, services, and ideas. Our goal is to help you make informed decisions by presenting the facts in a clear and concise manner. We eliminate the guesswork and provide you with the information you need to choose the best option for your needs.

8.2. Detailed Analysis

We go beyond surface-level comparisons and delve into the details that matter. Our team of experts conducts thorough research and analysis to provide you with a comprehensive understanding of each option. We compare features, specifications, prices, and other important factors to help you make the right choice.

8.3. User Reviews and Expert Opinions

COMPARE.EDU.VN includes reviews from users and insights from industry experts. This allows you to see what others are saying about the products or services you’re considering. User reviews provide real-world feedback, while expert opinions offer valuable insights from professionals in the field.

9. ANOVA in Different Fields

9.1. Healthcare Sector

ANOVA is extensively used in healthcare to compare the effectiveness of different treatments, drugs, and therapies. Researchers and healthcare professionals rely on ANOVA to analyze data from clinical trials and studies, ensuring that medical decisions are based on solid statistical evidence. For example, ANOVA can be used to compare the recovery times of patients undergoing different surgical procedures or the effectiveness of different medications in treating a specific disease.

9.2. Financial Analysis

In finance, ANOVA can be used to compare the performance of different investment strategies or portfolios. Financial analysts use ANOVA to analyze data from stock markets and other financial instruments, helping investors make informed decisions about where to allocate their capital. For example, ANOVA can be used to compare the returns of different mutual funds or the performance of different asset classes over a specific period.

9.3. Technological Advancements

ANOVA plays a vital role in technology by comparing the performance of different algorithms, software, and hardware. Engineers and developers use ANOVA to analyze data from experiments and simulations, ensuring that technological advancements are based on sound statistical principles. For example, ANOVA can be used to compare the speed and accuracy of different machine learning algorithms or the performance of different computer processors.

10. Practical Examples of ANOVA

10.1. Comparing Student Performance

Imagine a school district wants to evaluate the effectiveness of three different teaching methods on student performance. They divide students into three groups, each taught using a different method. At the end of the semester, they administer a standardized test to all students. ANOVA can be used to compare the test scores of the three groups, determining if there is a significant difference in performance between the teaching methods.

10.2. Analyzing Marketing Campaigns

A marketing team launches three different advertising campaigns to promote a new product. They track the sales generated by each campaign over a specific period. ANOVA can be used to compare the sales figures of the three campaigns, determining if there is a significant difference in the effectiveness of the campaigns.

10.3. Evaluating Drug Efficacy

A pharmaceutical company conducts a clinical trial to evaluate the efficacy of a new drug in treating a specific condition. They divide patients into three groups: one group receives the new drug, one group receives a standard treatment, and one group receives a placebo. They measure the severity of the condition in each patient after a specific period. ANOVA can be used to compare the severity scores of the three groups and determine whether any of the group means differ. Post-hoc tests are then needed to establish whether the new drug is significantly more effective than the standard treatment or the placebo.

11. Troubleshooting Common Issues in ANOVA

11.1. Addressing Non-Normality

If the data violates the assumption of normality, there are several options:

- Transform the data: Apply a mathematical transformation to the data, such as a logarithmic or square root transformation, to make it more normally distributed.

- Use a non-parametric test: Use a non-parametric test, such as the Kruskal-Wallis test, which does not assume normality.

- Use a robust ANOVA: Use a robust ANOVA method, which is less sensitive to violations of the normality assumption.
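For the non-parametric route, SciPy offers `scipy.stats.kruskal`. The sketch below applies it to made-up, right-skewed samples (each with one large value) where a normality assumption would be doubtful.

```python
from scipy import stats

# Made-up right-skewed samples, e.g. waiting times in minutes.
a = [1.2, 1.5, 1.9, 2.4, 9.8]
b = [2.1, 2.8, 3.3, 4.0, 15.5]
c = [3.9, 4.4, 5.2, 6.1, 22.0]

kw = stats.kruskal(a, b, c)
print(f"Kruskal-Wallis H = {kw.statistic:.3f}, p = {kw.pvalue:.4f}")
```

Because the test works on ranks, the single large value in each group has far less influence than it would on the group means.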

11.2. Handling Unequal Variances

If the data violates the assumption of homogeneity of variance, there are several options:

- Transform the data: Apply a mathematical transformation to the data, such as a logarithmic or square root transformation, to make the variances more equal.

- Use Welch’s ANOVA: Use Welch’s ANOVA, which is a modification of ANOVA that does not assume equal variances.

- Use a non-parametric test: Use a non-parametric test, such as the Kruskal-Wallis test, which does not assume normality. Keep in mind that strongly unequal spreads can still affect how its result should be interpreted.
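Welch's ANOVA is not part of SciPy's basic one-way function, but statsmodels provides `anova_oneway` in `statsmodels.stats.oneway`, where `use_var="unequal"` requests the Welch version. The sketch below is an assumption-laden illustration on made-up data, not a recommendation of one library over another.

```python
from statsmodels.stats.oneway import anova_oneway

# Made-up samples; group b has a much larger spread than a and c.
a = [10.1, 9.8, 10.3, 10.0, 9.9]
b = [12.5, 8.0, 15.2, 9.1, 13.8]
c = [11.0, 11.4, 10.7, 11.2, 10.9]

# use_var="unequal" drops the equal-variance assumption (Welch's ANOVA).
welch = anova_oneway([a, b, c], use_var="unequal")
print(f"Welch F = {welch.statistic:.3f}, p = {welch.pvalue:.4f}")
```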

11.3. Dealing with Outliers

Outliers can have a large impact on the results of ANOVA. There are several ways to deal with outliers:

- Remove the outliers: Remove the outliers from the data. However, this should only be done if there is a valid reason to believe that the outliers are not representative of the population.

- Transform the data: Apply a mathematical transformation to the data, such as a logarithmic or square root transformation, to reduce the impact of the outliers.

- Use a robust ANOVA: Use a robust ANOVA method, which is less sensitive to outliers.
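Before deciding how to handle outliers, it helps to flag them. A common rule of thumb, used here as an assumption rather than something prescribed by ANOVA itself, marks values more than 1.5 times the interquartile range beyond the quartiles. The sketch uses the standard library only, and the data are invented.

```python
from statistics import quantiles


def iqr_outliers(values, factor=1.5):
    """Return values lying more than factor * IQR outside the quartiles."""
    q1, _median, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - factor * iqr, q3 + factor * iqr
    return [x for x in values if x < low or x > high]


# Made-up measurements for one group; 40 is suspiciously large.
group = [10, 12, 11, 13, 12, 11, 40]
flagged = iqr_outliers(group)
print("flagged as possible outliers:", flagged)
```

Flagged values should be investigated (for example, for data entry errors) rather than removed automatically.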

12. Beyond the Basics: Advanced ANOVA Techniques

12.1. ANCOVA (Analysis of Covariance)

ANCOVA is an extension of ANOVA that includes one or more continuous variables (covariates) that may influence the dependent variable. ANCOVA is used to control for the effects of these covariates, allowing for a more precise comparison of the group means.

12.2. Mixed-Effects ANOVA

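Mixed-effects ANOVA is used when the data includes both fixed and random effects. Fixed effects are factors whose specific levels are of direct interest (for example, the treatments being compared), while random effects are factors whose levels are treated as a random sample from a larger population (for example, subjects or sites) and whose variability needs to be accounted for.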

12.3. Bayesian ANOVA

Bayesian ANOVA addresses the same question as traditional ANOVA within a Bayesian framework. It allows prior knowledge to be incorporated and provides posterior distributions for the parameters of interest, rather than a single p-value.

13. Future Trends in ANOVA

13.1. Integration with Machine Learning

In the future, ANOVA is likely to be increasingly integrated with machine learning techniques. This integration will allow for more sophisticated analysis of complex data and the development of more accurate predictive models.

13.2. Automation of ANOVA Procedures

As statistical software becomes more user-friendly, ANOVA procedures are likely to become more automated. This automation will make ANOVA more accessible to a wider range of users and will reduce the risk of errors in the analysis.

13.3. Expansion of ANOVA Applications

As the amount of data continues to grow, ANOVA is likely to be applied to an ever-increasing range of applications. This expansion will require the development of new ANOVA techniques and methods to address the challenges of analyzing complex and large datasets.

14. Conclusion: Making Informed Decisions with ANOVA and COMPARE.EDU.VN

ANOVA is a powerful statistical tool that enables the comparison of means across multiple groups, offering valuable insights for decision-making in various fields. At COMPARE.EDU.VN, we aim to simplify complex comparisons, providing you with objective, detailed, and user-centric analyses. Whether you’re evaluating medical treatments, marketing strategies, or educational programs, understanding ANOVA can help you make more informed choices.


15. Frequently Asked Questions (FAQs) About ANOVA

What is ANOVA?

- ANOVA stands for Analysis of Variance. It is a statistical test used to compare the means of two or more groups to determine if there is a statistically significant difference between them.

When should I use ANOVA instead of a t-test?

- Use ANOVA when you want to compare the means of three or more groups. A t-test is only suitable for comparing the means of two groups.

What are the assumptions of ANOVA?

- The main assumptions of ANOVA are normality, homogeneity of variance, and independence of observations.

What is the null hypothesis in ANOVA?

- The null hypothesis in ANOVA is that there is no significant difference between the means of the groups being compared.

What is the alternative hypothesis in ANOVA?

- The alternative hypothesis in ANOVA is that there is a significant difference between at least two of the group means.

What is the F-statistic?

- The F-statistic is the test statistic used in ANOVA. It is calculated by dividing the mean square between (MSB) by the mean square within (MSW).

What is a p-value?

- A p-value is the probability of observing a test statistic as extreme as, or more extreme than, the one calculated from the data, assuming that the null hypothesis is true.

What does a significant p-value mean in ANOVA?

- A significant p-value (typically less than 0.05) means that the null hypothesis is rejected, and it is concluded that there is a significant difference between at least two of the group means.

What are post-hoc tests?

- Post-hoc tests are used to determine which specific groups are different from each other after ANOVA has shown that there is a significant difference between at least two of the group means.

What are some common post-hoc tests?

- Some common post-hoc tests include Tukey’s HSD, Bonferroni correction, and Scheffe’s test.