Does ANOVA Compare Means or Variance?

Analysis of Variance (ANOVA) compares means, and it does so by analyzing variance. This guide explains what the test does, how it compares multiple group means by partitioning variance, and how researchers and analysts can use it to make informed decisions.

1. Understanding the Fundamentals of ANOVA

The Analysis of Variance, commonly known as ANOVA, is a statistical test used to analyze the differences between the means of two or more groups. While it might seem counterintuitive that a test called “Analysis of Variance” is used to compare means, the name stems from the fact that ANOVA assesses differences in means by analyzing the variance within and between groups. To fully grasp the concept, it’s essential to understand the core components and principles underlying ANOVA.

1.1. The Purpose of ANOVA

The primary purpose of ANOVA is to determine whether there are any statistically significant differences between the means of two or more independent groups. In simpler terms, ANOVA helps us understand if the observed differences between group means are likely due to a real effect or simply due to random chance.

1.2. How ANOVA Works: Analyzing Variance

ANOVA works by partitioning the total variance in a dataset into different sources of variation. These sources include:

- Between-Group Variance: This measures the variation between the means of different groups. It reflects how much the group means differ from each other and from the overall mean.
- Within-Group Variance: This measures the variation within each group. It represents the degree to which individual data points within each group vary from their respective group means. This is often attributed to random error or unexplained variability.

By comparing these variances, ANOVA can determine if the between-group variance is significantly larger than the within-group variance. If this is the case, it suggests that the group means are indeed different from each other.
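The partition described above can be checked numerically. The following is a minimal sketch using only the Python standard library; the three groups and their scores are hypothetical values chosen for illustration. It shows that the total sum of squares splits exactly into a between-group part and a within-group part.

```python
from statistics import mean

# Hypothetical scores for three groups (illustrative data only).
groups = {
    "A": [4.0, 5.0, 6.0],
    "B": [6.0, 7.0, 8.0],
    "C": [8.0, 9.0, 10.0],
}

all_values = [x for values in groups.values() for x in values]
grand_mean = mean(all_values)
group_means = {name: mean(values) for name, values in groups.items()}

# Between-group sum of squares: group means around the grand mean,
# weighted by group size.
ss_between = sum(
    len(values) * (group_means[name] - grand_mean) ** 2
    for name, values in groups.items()
)

# Within-group sum of squares: observations around their own group mean.
ss_within = sum(
    (x - group_means[name]) ** 2
    for name, values in groups.items()
    for x in values
)

# Total sum of squares: observations around the grand mean.
ss_total = sum((x - grand_mean) ** 2 for x in all_values)

print(ss_between, ss_within, ss_total)  # 24.0 6.0 30.0
```

Here most of the total variation (24 of 30) comes from differences between the group means, which is the pattern ANOVA looks for.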

1.3. Hypotheses in ANOVA

ANOVA tests the following hypotheses:

- Null Hypothesis (H0): All group means are equal in the population (μ1 = μ2 = ... = μk).
- Alternative Hypothesis (H1): At least one group mean differs from the others. This does not necessarily mean that all group means are different, only that they are not all equal.

2. Key Assumptions of ANOVA

To ensure the validity of ANOVA results, several assumptions must be met. These assumptions are crucial for the reliability and accuracy of the analysis.

2.1. Independence of Observations

The observations within each group and between groups must be independent of each other. This means that the data points should not be influenced by or related to other data points in the dataset. This assumption is particularly important in experimental designs where participants are randomly assigned to different treatment groups.

2.2. Normality of Data

The data within each group should be approximately normally distributed. Normality can be assessed using various methods, such as histograms, Q-Q plots, or formal normality tests like the Shapiro-Wilk test or the Kolmogorov-Smirnov test. If the data significantly deviate from normality, transformations or non-parametric alternatives may be considered.

2.3. Homogeneity of Variance (Homoscedasticity)

The variances of the groups being compared should be approximately equal. This assumption, also known as homoscedasticity, can be assessed using tests like Levene’s test or Bartlett’s test. Markedly unequal variances can distort the F-test, especially when the group sizes are also unequal. In such cases, adjustments like Welch’s ANOVA or transformations may be necessary.
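To make the idea behind Levene’s test concrete, here is a rough sketch in standard-library Python. It relies on the fact that Levene’s statistic is a one-way ANOVA F computed on the absolute deviations of each observation from its group mean (the Brown-Forsythe variant uses the group median instead). The function names and data are hypothetical, and the sketch stops at the statistic; converting it to a p-value needs the F distribution with (k - 1, N - k) degrees of freedom, which statistical software provides.

```python
from statistics import mean

def one_way_f(groups):
    """Return the one-way ANOVA F-statistic for a list of samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    means = [mean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))

def levene_statistic(groups):
    """Levene's W: an ANOVA F on absolute deviations from each group mean."""
    deviations = [[abs(x - mean(g)) for x in g] for g in groups]
    return one_way_f(deviations)

# Hypothetical data: same spread in every group vs. clearly different spreads.
similar_spread = [[1, 2, 3, 4, 5], [2, 3, 4, 5, 6], [3, 4, 5, 6, 7]]
different_spread = [[4, 5, 6], [1, 5, 9], [-5, 5, 15]]

print(levene_statistic(similar_spread))    # 0.0: identical spreads
print(levene_statistic(different_spread))  # larger: spreads differ
```

A statistic near zero means the groups vary by about the same amount; larger values signal that the equal-variance assumption deserves a closer look.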

2.4. Data Measurement Level

ANOVA requires that the dependent variable is measured on an interval or ratio scale. This means that the data should have meaningful numerical values with equal intervals between them.

3. Types of ANOVA

There are several types of ANOVA, each suited for different experimental designs and research questions. The most common types include:

3.1. One-Way ANOVA

One-Way ANOVA is used when comparing the means of two or more independent groups on a single factor (independent variable). This is the most basic form of ANOVA and is suitable when you want to determine if there is a significant difference between the means of groups that differ in one characteristic.

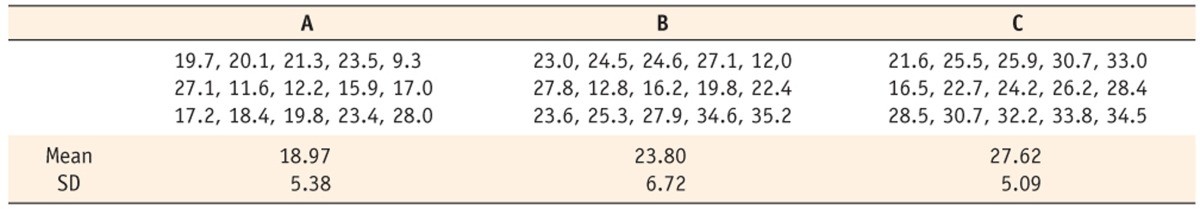

Example: A researcher wants to compare the effectiveness of three different teaching methods on student test scores. The independent variable is the teaching method (with three levels: Method A, Method B, and Method C), and the dependent variable is the test score.

3.2. Two-Way ANOVA

Two-Way ANOVA is used when examining the effects of two independent variables on a dependent variable. It allows researchers to assess not only the main effects of each independent variable but also the interaction effect between them.

Example: A marketing manager wants to study the impact of both advertising channel (online vs. print) and promotional offer (discount vs. no discount) on sales. The dependent variable is sales revenue.

3.3. Repeated Measures ANOVA

Repeated Measures ANOVA is used when the same subjects are measured under different conditions or at different points in time. This type of ANOVA is appropriate when you have related samples, such as when participants are measured multiple times.

Example: A psychologist wants to examine the effect of a new drug on anxiety levels. The same group of participants is measured for anxiety before, during, and after the course of treatment.

3.4. Multivariate Analysis of Variance (MANOVA)

MANOVA is used when there are two or more dependent variables that are being analyzed simultaneously. It is an extension of ANOVA that allows researchers to examine the effects of independent variables on a combination of dependent variables.

Example: A researcher wants to study the impact of different exercise programs on both weight loss and cholesterol levels. The independent variable is the type of exercise program, and the dependent variables are weight loss and cholesterol levels.

4. Conducting ANOVA: A Step-by-Step Guide

Performing an ANOVA involves several steps, from data preparation to interpretation of results. Here’s a step-by-step guide:

4.1. Data Preparation

- Collect Data: Gather the data for each group being compared.
- Organize Data: Arrange the data in a structured format, such as a spreadsheet, with each group represented in a separate column.
- Check Assumptions: Verify that the assumptions of ANOVA (independence, normality, and homogeneity of variance) are met. Use appropriate tests and visualizations to assess these assumptions.

4.2. Setting Up the ANOVA Test

- Software: Use statistical software such as SPSS, R, SAS, or Python to perform the ANOVA.
- Input Data: Enter the data into the software, specifying the independent and dependent variables.
- Select ANOVA Type: Choose the appropriate type of ANOVA based on your experimental design (e.g., one-way, two-way, repeated measures).

4.3. Running the ANOVA

- Execute the Test: Run the ANOVA test in the software.
- Review Output: Examine the output generated by the software, which typically includes an ANOVA table.

4.4. Interpreting Results

- F-Statistic: Look at the F-statistic, which is the ratio of the between-group mean square to the within-group mean square. Values near 1 are what you would expect if the group means were equal; larger values point toward real differences.
- P-Value: Assess the p-value associated with the F-statistic. If the p-value is less than your chosen significance level (alpha, usually 0.05), you reject the null hypothesis.
- Degrees of Freedom: Note the degrees of freedom for both the between-group and within-group variances.
- Effect Size: Calculate an effect size measure, such as eta-squared (η²) or omega-squared (ω²), to quantify the proportion of variance in the dependent variable that is explained by the independent variable.

4.5. Post-Hoc Tests

- Perform Post-Hoc Tests: If the ANOVA result is significant (i.e., you reject the null hypothesis), perform post-hoc tests to determine which specific groups differ significantly from each other. Common post-hoc tests include Tukey’s HSD, Bonferroni, Scheffé, and Dunnett’s test.
- Interpret Post-Hoc Results: Analyze the results of the post-hoc tests to identify which pairs of group means are significantly different.

5. Understanding the ANOVA Table

The ANOVA table is a crucial component of the ANOVA output. It provides a summary of the analysis, including the sources of variation, degrees of freedom, sums of squares, mean squares, F-statistic, and p-value.

5.1. Components of the ANOVA Table

- Source of Variation: This column lists the different sources of variation in the data, including between-groups (factor), within-groups (error), and total.
- Degrees of Freedom (df): This indicates the number of independent pieces of information used to calculate the variance. For between-groups, df = k - 1 (where k is the number of groups). For within-groups, df = N - k (where N is the total number of observations).
- Sum of Squares (SS): This represents the total variability associated with each source of variation. It is the sum of the squared deviations from the relevant mean: group means around the overall mean for between-groups, and observations around their own group mean for within-groups.
- Mean Square (MS): This is the estimate of variance for each source of variation. It is calculated by dividing the sum of squares by the degrees of freedom (MS = SS / df).
- F-Statistic: This is the test statistic used to determine if there is a significant difference between the group means. It is calculated by dividing the mean square between-groups by the mean square within-groups (F = MSB / MSW).
- P-Value: This is the probability of observing an F-statistic at least as large as the one obtained if the null hypothesis is true. A small p-value (typically less than 0.05) is conventionally taken as evidence against the null hypothesis.
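The table components listed above can be assembled by hand for a small data set. The sketch below uses standard-library Python with hypothetical scores; `anova_table` is an illustrative helper, not a library function. It leaves out the p-value, which statistical software obtains from the F distribution with (k - 1, N - k) degrees of freedom.

```python
from statistics import mean

def anova_table(groups):
    """Return rows (source, df, SS, MS, F) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    means = [mean(g) for g in groups]

    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)

    df_between, df_within = k - 1, n_total - k
    ms_between = ss_between / df_between   # MSB = SSB / (k - 1)
    ms_within = ss_within / df_within      # MSW = SSW / (N - k)
    f_stat = ms_between / ms_within        # F = MSB / MSW

    return [
        ("Between groups", df_between, ss_between, ms_between, f_stat),
        ("Within groups", df_within, ss_within, ms_within, None),
        ("Total", n_total - 1, ss_between + ss_within, None, None),
    ]

# Hypothetical scores for three groups (illustrative data only).
table = anova_table([[4, 5, 6], [6, 7, 8], [8, 9, 10]])
for source, df, ss, ms, f in table:
    ms_text = "" if ms is None else f"{ms:.2f}"
    f_text = "" if f is None else f"{f:.2f}"
    print(f"{source:<15} {df:>3} {ss:>8.2f} {ms_text:>8} {f_text:>8}")
```

For these data the between-groups row has df = 2, SS = 24, MS = 12 and F = 12, and the within-groups row has df = 6, SS = 6 and MS = 1.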

5.2. Interpreting the ANOVA Table

To interpret the ANOVA table, focus on the F-statistic and the p-value. If the p-value is less than the chosen significance level (alpha), you reject the null hypothesis, indicating that there is a statistically significant difference between the group means. A larger F-statistic means the between-group variation is large relative to the within-group variation, but it is not itself a measure of effect size; use eta-squared or omega-squared for that.

6. Post-Hoc Tests: Determining Which Groups Differ

If the ANOVA result is significant, it indicates that there is at least one significant difference between the group means. However, it does not tell you which specific groups differ from each other. To determine this, you need to perform post-hoc tests.

6.1. Common Post-Hoc Tests

- Tukey’s HSD (Honestly Significant Difference): This test is widely used and provides a good balance between controlling Type I and Type II errors. It assumes equal variances and was designed for equal sample sizes; the Tukey-Kramer variant extends it to unequal sample sizes.
- Bonferroni: This test is a conservative approach that controls the familywise error rate by dividing the significance level (alpha) by the number of comparisons. It is suitable when you have a small number of comparisons.
- Scheffé: This test is the most conservative of the four and is suitable for complex comparisons. It controls the familywise error rate for all possible contrasts, not only pairwise comparisons.
- Dunnett’s Test: This test is used when you want to compare multiple treatment groups to a single control group.
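As a small illustration of the Bonferroni adjustment described above, the following sketch lists every pairwise comparison among three groups and divides alpha by the number of comparisons. The group names and unadjusted p-values are hypothetical, and `bonferroni_pairs` is an illustrative helper rather than a library function.

```python
from itertools import combinations

def bonferroni_pairs(group_names, alpha=0.05):
    """List all pairwise comparisons and the Bonferroni-adjusted alpha."""
    pairs = list(combinations(group_names, 2))
    adjusted_alpha = alpha / len(pairs)
    return pairs, adjusted_alpha

pairs, adjusted_alpha = bonferroni_pairs(["A", "B", "C"])
print(pairs)                     # [('A', 'B'), ('A', 'C'), ('B', 'C')]
print(round(adjusted_alpha, 4))  # 0.0167

# Hypothetical unadjusted p-values from three pairwise tests.
raw_p = {("A", "B"): 0.45, ("A", "C"): 0.002, ("B", "C"): 0.03}
significant = [pair for pair in pairs if raw_p[pair] < adjusted_alpha]
print(significant)               # [('A', 'C')]
```

The B versus C comparison would pass an unadjusted 0.05 threshold but not the adjusted one, which is the sense in which the method is conservative.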

6.2. Interpreting Post-Hoc Results

The results of post-hoc tests will indicate which pairs of group means are significantly different from each other. Typically, these results are presented in the form of pairwise comparisons with associated p-values. If the p-value for a specific pairwise comparison is less than the chosen significance level (alpha), you conclude that there is a statistically significant difference between those two group means.

7. Reporting ANOVA Results

When reporting ANOVA results, it is important to provide enough information to allow readers to understand the analysis and its implications. Here’s a guideline for reporting ANOVA results:

7.1. Descriptive Statistics

Provide descriptive statistics for each group, including the mean, standard deviation, and sample size. This gives readers an overview of the data being analyzed.

7.2. ANOVA Table

Present the ANOVA table, including the sources of variation, degrees of freedom, sums of squares, mean squares, F-statistic, and p-value. This allows readers to assess the statistical significance of the results.

7.3. Post-Hoc Test Results

If post-hoc tests were performed, report the results of these tests, including the pairwise comparisons and associated p-values. This indicates which specific groups differ significantly from each other.

7.4. Effect Size

Report an effect size measure, such as eta-squared (η²) or omega-squared (ω²), to quantify the proportion of variance in the dependent variable that is explained by the independent variable. This provides a measure of the practical significance of the results.

7.5. Interpretation

Provide a clear and concise interpretation of the results, explaining the implications of the findings in the context of your research question. Discuss any limitations of the study and suggest directions for future research.

Example:

“A one-way ANOVA was conducted to compare the effect of three different teaching methods (A, B, and C) on student test scores. The results showed a statistically significant difference between the teaching methods, F(2, 57) = 8.52, p < 0.001, η² = 0.23. Post-hoc tests using Tukey’s HSD revealed that teaching method C resulted in significantly higher test scores compared to both teaching method A (p = 0.002) and teaching method B (p = 0.015). There was no significant difference between teaching methods A and B (p = 0.45). These findings suggest that teaching method C is more effective than the other two methods in improving student test scores.”
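The numbers in a report like this one can be cross-checked against each other. The sketch below recovers eta-squared from the F-statistic and its degrees of freedom using η² = (F × df_between) / (F × df_between + df_within), and computes the p-value with a closed form that holds only when the between-groups df is exactly 2 (three groups). Both helper functions are illustrative and written for this example.

```python
def eta_squared_from_f(f_stat, df_between, df_within):
    """Eta-squared recovered from F and its degrees of freedom."""
    return (f_stat * df_between) / (f_stat * df_between + df_within)

def p_value_df1_equals_2(f_stat, df_within):
    """Upper-tail p-value of an F(2, df_within) statistic.

    This closed form is valid only when the numerator df is exactly 2.
    """
    return (1 + 2 * f_stat / df_within) ** (-df_within / 2)

# Values taken from the example report: F(2, 57) = 8.52.
eta_sq = eta_squared_from_f(8.52, 2, 57)
p_value = p_value_df1_equals_2(8.52, 57)

print(round(eta_sq, 2))  # 0.23
print(p_value < 0.001)   # True
```

Both results agree with the reported η² = 0.23 and p < 0.001. The degrees of freedom also imply 60 observations in total, since df_within = N - k = 57 with k = 3.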

Distributions with the same between group variance. (a) smaller variance within groups; (b) larger variance within groups

8. Practical Applications of ANOVA

ANOVA is a versatile statistical tool with applications across various fields. Here are some practical examples:

8.1. Healthcare

- Comparing Drug Efficacy: Researchers use ANOVA to compare the effectiveness of different drugs in treating a particular condition. For example, they might compare the mean reduction in blood pressure among patients receiving different medications.
- Evaluating Treatment Outcomes: ANOVA can be used to evaluate the outcomes of different treatment approaches. For instance, it can compare the mean recovery time for patients undergoing different rehabilitation programs after surgery.

8.2. Education

- Assessing Teaching Methods: Educators use ANOVA to compare the effectiveness of different teaching methods on student performance. For example, they might compare the mean test scores of students taught using traditional lectures versus interactive simulations.
- Comparing Educational Programs: ANOVA can be used to compare the outcomes of different educational programs. For instance, it can compare the mean graduation rates of students enrolled in different types of vocational training programs.

8.3. Business and Marketing

- Analyzing Advertising Effectiveness: Marketers use ANOVA to compare the effectiveness of different advertising campaigns. For example, they might compare the mean sales revenue generated by different advertising channels (e.g., online ads, TV commercials, print ads).
- Evaluating Customer Satisfaction: ANOVA can be used to compare customer satisfaction levels across different product lines or service offerings. For instance, it can compare the mean satisfaction scores of customers using different versions of a software application.

8.4. Engineering

- Comparing Product Designs: Engineers use ANOVA to compare the performance of different product designs. For example, they might compare the mean tensile strength of different materials used in bridge construction.
- Optimizing Manufacturing Processes: ANOVA can be used to optimize manufacturing processes by identifying the factors that significantly impact product quality. For instance, it can compare the mean defect rates of products manufactured using different production settings.

9. Addressing Violations of ANOVA Assumptions

It’s not uncommon for real-world data to violate one or more of the assumptions of ANOVA. When this occurs, there are several strategies that can be used to address these violations and ensure the validity of the analysis.

9.1. Transformations

- Data Transformations: Applying mathematical transformations to the data can help to normalize the distribution and stabilize the variance. Common transformations include logarithmic, square root, and inverse transformations. The choice of transformation depends on the nature of the data and the specific violation.
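A quick way to see how a transformation can stabilize variance is to compare group variances before and after applying it. The sketch below uses hypothetical data in which the spread grows in proportion to the level, a situation where a logarithmic transformation is a natural choice.

```python
from math import log10
from statistics import variance

# Hypothetical groups whose spread grows with their level (illustrative data).
groups = {
    "low": [1.0, 2.0, 4.0],
    "mid": [10.0, 20.0, 40.0],
    "high": [100.0, 200.0, 400.0],
}

raw_variances = {name: variance(values) for name, values in groups.items()}
log_variances = {
    name: variance([log10(x) for x in values]) for name, values in groups.items()
}

# Ratio of largest to smallest variance, before and after the transformation.
raw_ratio = max(raw_variances.values()) / min(raw_variances.values())
log_ratio = max(log_variances.values()) / min(log_variances.values())

print(round(raw_ratio))     # 10000
print(round(log_ratio, 6))  # 1.0
```

On the raw scale the largest variance is 10,000 times the smallest; on the log scale the three variances are equal. Real data will rarely line up this neatly, so it is worth re-checking the assumptions after any transformation.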

9.2. Non-Parametric Alternatives

- Non-Parametric Tests: If the data clearly violate the normality assumption, non-parametric alternatives to ANOVA, such as the Kruskal-Wallis test, can be used. These rank-based tests do not rely on strict distributional assumptions, although they are not assumption-free: the Kruskal-Wallis test, for example, is easiest to interpret when the groups have similarly shaped distributions.

9.3. Welch’s ANOVA

- Welch’s ANOVA: This is a robust alternative to ANOVA that does not assume equal variances. It is appropriate when the variances of the groups being compared are significantly different. Welch’s ANOVA adjusts the degrees of freedom to account for the unequal variances, providing a more accurate test result.
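For readers who want to see the adjustment spelled out, here is a sketch of Welch’s F-statistic and its degrees of freedom in standard-library Python. It follows the commonly cited formulation: each group is weighted by n / s², the statistic is built around the weighted mean, and the denominator degrees of freedom are reduced according to how unequal the weights are. The function name and data are hypothetical, and the p-value still has to come from an F distribution with (df1, df2) degrees of freedom.

```python
from statistics import mean, variance

def welch_anova(groups):
    """Return (F, df1, df2) for Welch's one-way ANOVA."""
    k = len(groups)
    sizes = [len(g) for g in groups]
    means = [mean(g) for g in groups]
    # Weight each group by n_i / s_i^2, so noisier groups count for less.
    weights = [n / variance(g) for n, g in zip(sizes, groups)]
    total_weight = sum(weights)
    weighted_mean = sum(w * m for w, m in zip(weights, means)) / total_weight

    numerator = sum(
        w * (m - weighted_mean) ** 2 for w, m in zip(weights, means)
    ) / (k - 1)
    lam = sum(
        (1 - w / total_weight) ** 2 / (n - 1) for w, n in zip(weights, sizes)
    )
    denominator = 1 + 2 * (k - 2) / (k ** 2 - 1) * lam

    f_stat = numerator / denominator
    df1 = k - 1
    df2 = (k ** 2 - 1) / (3 * lam)  # generally not an integer
    return f_stat, df1, df2

# Hypothetical groups with visibly different spreads (illustrative data).
unequal = [[10, 11, 12, 11, 10], [14, 18, 10, 22, 16], [20, 35, 5, 40, 15]]
f_stat, df1, df2 = welch_anova(unequal)
print(f"Welch F = {f_stat:.3f} on ({df1}, {df2:.2f}) degrees of freedom")
```

The within-groups degrees of freedom are typically smaller than the N - k used by the classic test, which is how the method accounts for the extra uncertainty introduced by unequal variances.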

9.4. Bootstrapping

- Bootstrapping: This is a resampling technique that can be used to estimate the sampling distribution of the test statistic without making strong distributional assumptions. Bootstrapping involves repeatedly sampling from the original data with replacement and calculating the test statistic for each resampled dataset. The resulting distribution of test statistics can be used to estimate p-values and confidence intervals.
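The resampling procedure can be sketched in a few lines. The version below is one possible variant, not the only way to bootstrap an ANOVA: it centres each group on its own mean, pools the resulting residuals to represent the null hypothesis of equal means, resamples groups of the original sizes with replacement, and counts how often the resampled F-statistic reaches the observed one. Function names, the seed, and the data are hypothetical.

```python
import random
from statistics import mean

def f_statistic(groups):
    """One-way ANOVA F-statistic for a list of samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    means = [mean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    if ss_within == 0:
        # Degenerate resample with no within-group spread.
        return float("inf") if ss_between > 0 else 0.0
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))

def bootstrap_anova_p(groups, n_resamples=2000, seed=0):
    """Estimate a p-value by resampling pooled, mean-centred residuals."""
    rng = random.Random(seed)
    observed = f_statistic(groups)
    # Centring removes the group differences, mimicking the null hypothesis.
    residuals = [x - mean(g) for g in groups for x in g]
    exceed = 0
    for _ in range(n_resamples):
        resampled = [rng.choices(residuals, k=len(g)) for g in groups]
        if f_statistic(resampled) >= observed:
            exceed += 1
    # Add-one correction keeps the estimate away from exactly zero.
    return (exceed + 1) / (n_resamples + 1)

# Hypothetical, clearly separated groups (illustrative data only).
separated = [[1, 2, 3, 4, 5], [11, 12, 13, 14, 15], [21, 22, 23, 24, 25]]
print(bootstrap_anova_p(separated))
```

Pooling the residuals still treats the groups as sharing a common error distribution, so this variant relaxes the normality assumption more than the equal-variance one.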

10. ANOVA vs. t-Tests: When to Use Which

Both ANOVA and t-tests are used to compare means, but they are appropriate for different situations. Here’s a comparison of ANOVA and t-tests to help you decide when to use each test:

10.1. Number of Groups

- t-Tests: t-tests are used to compare the means of two groups. There are different types of t-tests for independent samples (unpaired t-test) and related samples (paired t-test).
- ANOVA: ANOVA is used to compare the means of two or more groups. While ANOVA can be used to compare two groups, it is more commonly used when there are three or more groups.
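With exactly two groups, a one-way ANOVA and an independent-samples t-test with pooled variance are the same test: the F-statistic equals the square of the t-statistic. The following sketch checks this on hypothetical data using only the standard library; both helper functions are written for this example.

```python
from math import sqrt
from statistics import mean, variance

def pooled_t(a, b):
    """Student's t for two independent samples with pooled variance."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))

def one_way_f(groups):
    """One-way ANOVA F-statistic for a list of samples."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    means = [mean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))

# Hypothetical measurements for two groups (illustrative data only).
a = [4.0, 5.0, 6.0, 7.0]
b = [6.0, 8.0, 9.0, 11.0]

t = pooled_t(a, b)
f = one_way_f([a, b])
print(round(t ** 2, 6), round(f, 6))  # 6.0 6.0
```

Because the two statistics carry the same information, the choice between them for two groups is a matter of convenience.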

10.2. Type of Comparison

- t-Tests: t-tests are designed for pairwise comparisons between two groups.
- ANOVA: ANOVA provides an overall test of whether there are any significant differences between the means of the groups. If the ANOVA result is significant, post-hoc tests are needed to determine which specific groups differ from each other.

10.3. Error Rate

- t-Tests: When comparing more than two groups, using multiple t-tests can inflate the Type I error rate (i.e., the probability of falsely rejecting the null hypothesis).
- ANOVA: ANOVA controls the overall Type I error rate, making it more appropriate when comparing three or more groups.
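The size of this inflation is easy to approximate. If each of m comparisons is run at level alpha and the tests are treated as independent, the chance of at least one false positive is 1 - (1 - alpha)^m. Pairwise t-tests on shared groups are not truly independent, so the sketch below gives a rough guide rather than an exact figure; `familywise_error_rate` is an illustrative helper.

```python
from math import comb

def familywise_error_rate(n_groups, alpha=0.05):
    """Approximate chance of at least one false positive across all
    pairwise comparisons, assuming the tests are independent."""
    n_comparisons = comb(n_groups, 2)
    return n_comparisons, 1 - (1 - alpha) ** n_comparisons

for k in (3, 4, 5):
    m, fwer = familywise_error_rate(k)
    print(f"{k} groups: {m} comparisons, familywise error rate ~ {fwer:.3f}")
```

With three groups the approximate rate is already about 0.143, and with five groups (ten comparisons) it is about 0.401, compared with the nominal 0.05 of a single overall ANOVA.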

10.4. Complexity

- t-Tests: t-tests are relatively simple to perform and interpret.
- ANOVA: ANOVA can be more complex, especially when dealing with factorial designs (i.e., designs with multiple independent variables) or repeated measures.

11. Common Mistakes to Avoid When Using ANOVA

To ensure accurate and reliable results, it’s important to avoid common mistakes when using ANOVA. Here are some pitfalls to watch out for:

11.1. Violating Assumptions

- Ignoring Assumptions: Failing to check and address violations of ANOVA assumptions can lead to inaccurate results. Always verify that the assumptions of independence, normality, and homogeneity of variance are met.

11.2. Misinterpreting Results

- Overgeneralizing Results: A significant ANOVA only indicates that at least one group mean differs from the others. It does not tell you which specific groups differ from each other. When the overall test is significant, follow up with post-hoc tests to identify the specific group differences.
- Confusing Statistical Significance with Practical Significance: A statistically significant result does not necessarily mean that the difference is practically meaningful. Consider the effect size and the context of the research when interpreting the results.

11.3. Using the Wrong Type of ANOVA

- Applying the Wrong Test: Using the wrong type of ANOVA for the experimental design can lead to incorrect conclusions. Choose the appropriate type of ANOVA based on the number of independent variables, the type of samples (independent or related), and the number of dependent variables.

11.4. Not Controlling for Confounding Variables

- Ignoring Confounding Variables: Failing to control for confounding variables can bias the results of the ANOVA. Identify and control for any variables that may influence the relationship between the independent and dependent variables.

12. Future Trends in ANOVA

As statistical methods continue to evolve, several future trends are emerging in the use of ANOVA. These trends include:

12.1. Bayesian ANOVA

- Bayesian ANOVA: This approach incorporates prior information into the analysis, providing a more nuanced and informative result. Bayesian ANOVA allows researchers to estimate the probability of different hypotheses, rather than simply rejecting or failing to reject the null hypothesis.

12.2. Robust ANOVA Methods

- Robust ANOVA: These methods are less sensitive to violations of assumptions and outliers, providing more reliable results when dealing with non-ideal data. Robust ANOVA techniques, such as trimmed means and M-estimators, can be used to reduce the impact of outliers and non-normality.

12.3. Integration with Machine Learning

- Machine Learning Integration: Integrating ANOVA with machine learning techniques can provide more powerful and predictive models. For example, ANOVA can be used to identify the most important variables to include in a machine learning model.

12.4. Advanced Visualization Techniques

- Advanced Visualization: Using advanced visualization techniques to explore and present ANOVA results can enhance understanding and communication. Techniques such as box plots, violin plots, and interaction plots can provide valuable insights into the data and the relationships between variables.

13. Conclusion: ANOVA as a Powerful Tool for Comparing Means

ANOVA is a powerful and versatile statistical tool for comparing the means of two or more groups. By analyzing the variance within and between groups, ANOVA can determine whether there are any statistically significant differences between the group means. While ANOVA is a valuable tool, it’s important to understand its assumptions, limitations, and potential pitfalls. By following best practices and avoiding common mistakes, researchers and analysts can use ANOVA to gain valuable insights and make informed decisions. Remember, ANOVA helps you understand if observed differences are real or just random chance.

FAQ: Understanding ANOVA

1. What is ANOVA used for?

ANOVA is used to compare the means of two or more groups to determine if there are statistically significant differences between them.

2. What are the main assumptions of ANOVA?

The main assumptions of ANOVA are independence of observations, normality of data, and homogeneity of variance.

3. What is the difference between one-way ANOVA and two-way ANOVA?

One-way ANOVA compares the means of groups based on one independent variable, while two-way ANOVA examines the effects of two independent variables on a dependent variable.

4. What are post-hoc tests and why are they needed?

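Post-hoc tests are used after a significant ANOVA result to determine which specific groups differ significantly from each other. They are needed because the ANOVA itself only shows that at least one difference exists, not where it lies.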

5. What is the F-statistic in ANOVA?

The F-statistic is the ratio of between-group variance to within-group variance, used to determine if there is a significant difference between group means.

6. How do you interpret the p-value in ANOVA?

If the p-value is less than the significance level (alpha), you reject the null hypothesis, indicating a statistically significant difference between the group means.

7. What is homogeneity of variance and why is it important?

Homogeneity of variance means that the variances of the groups being compared should be approximately equal. Violations can lead to inaccurate ANOVA results.

8. What are some alternatives to ANOVA if the assumptions are violated?

Alternatives include data transformations, non-parametric tests like the Kruskal-Wallis test, and Welch’s ANOVA.

9. Can ANOVA be used for related samples?

Yes, repeated measures ANOVA is used when the same subjects are measured under different conditions or at different points in time.

10. What is effect size in ANOVA and why is it important?

Effect size measures the proportion of variance in the dependent variable explained by the independent variable, indicating the practical significance of the results.