In statistical analysis, understanding and interpreting p-values is crucial for drawing meaningful conclusions from data. Can you compare p-values? This is a common question among researchers, students, and data enthusiasts alike. At COMPARE.EDU.VN, we provide the insights and comparisons you need to make informed decisions. Comparing p-values requires careful consideration of the context, the underlying hypotheses, and the limitations of statistical significance. This guide clarifies when p-value comparisons are meaningful and when they mislead, with practical advice and clear explanations.

1. Understanding P-Values: The Foundation of Comparison

1.1. Defining the P-Value

The p-value, short for probability value, is a fundamental concept in statistical hypothesis testing. It quantifies the probability of obtaining test results as extreme as, or more extreme than, the results actually observed, assuming that the null hypothesis is true. In simpler terms, the p-value helps you determine the strength of the evidence against the null hypothesis. The smaller the p-value, the stronger the evidence against the null hypothesis. A working understanding of hypothesis testing and statistical significance is also needed to interpret p-values.
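The definition above can be made concrete with a small calculation. The sketch below assumes a made-up experiment (10 coin flips, 9 heads) and a null hypothesis that the coin is fair; the function name is illustrative, not part of any library.

```python
from math import comb


def binomial_p_value_one_sided(successes: int, trials: int, p_null: float = 0.5) -> float:
    """Probability of seeing `successes` or more in `trials` tries if the null rate is true."""
    return sum(
        comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Hypothetical data: 9 heads in 10 flips, null hypothesis "the coin is fair".
p_value = binomial_p_value_one_sided(successes=9, trials=10)
print(f"one-sided p-value: {p_value:.4f}")  # 11/1024, about 0.0107
```

The result is the probability of "9 or 10 heads" under a fair coin, which is exactly the "as extreme as, or more extreme than" part of the definition.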

1.2. The Null Hypothesis: A Critical Context

The null hypothesis is a statement of no effect or no difference. It is the starting point for hypothesis testing. For instance, in a clinical trial comparing a new drug to a placebo, the null hypothesis might be that there is no difference in effectiveness between the two treatments. The p-value helps you assess whether the data provide enough evidence to reject this null hypothesis. The significance level and the statistical power of the test should be chosen when the study is designed, before the data are examined.

1.3. Interpreting P-Values: What They Tell You

A small p-value (typically ≤ 0.05) suggests that the observed data are unlikely to have occurred if the null hypothesis were true. This leads to the rejection of the null hypothesis in favor of the alternative hypothesis. Conversely, a large p-value (typically > 0.05) suggests that the observed data are consistent with the null hypothesis, and you fail to reject it. However, it’s crucial to remember that failing to reject the null hypothesis does not mean it is true; it simply means there isn’t enough evidence to reject it. Effect size and confidence intervals provide additional context for interpreting p-values.

1.4. Limitations of P-Values: What They Don’t Tell You

While p-values are valuable, they have limitations. A p-value does not indicate the size or importance of an effect. A statistically significant result (small p-value) may not be practically significant if the effect size is small. Additionally, p-values are influenced by sample size; larger samples can produce smaller p-values even for small effects. Misinterpreting p-values can lead to incorrect conclusions, emphasizing the need for a comprehensive understanding. Bayesian statistics and likelihood ratios offer alternative approaches to hypothesis testing.

2. Can You Directly Compare P-Values? Navigating the Complexities

2.1. The Short Answer: It’s Complicated

Directly comparing p-values from different studies or tests is generally not recommended without careful consideration. P-values are context-dependent, influenced by sample size, study design, and the specific hypotheses being tested. A smaller p-value in one study does not necessarily mean that the effect is larger or more important than in another study with a larger p-value. Statistical assumptions and data quality also play a significant role in the validity of p-values.

2.2. When Comparisons Might Be Meaningful

In specific scenarios, comparing p-values can provide some insights. For instance, if two studies use the same methodology, sample size, and test the same hypothesis, comparing p-values might offer a relative sense of the strength of evidence. However, even in these cases, it’s essential to consider other factors such as effect size, confidence intervals, and the overall consistency of results. Meta-analysis provides a more robust approach to combining evidence from multiple studies.

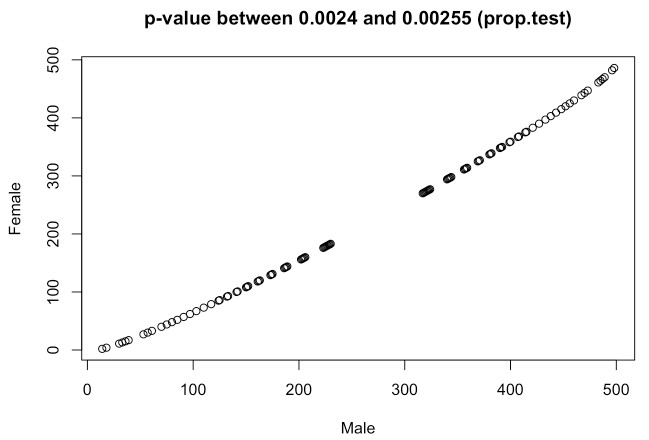

2.3. Factors Influencing P-Values: Beyond the Hypothesis

Several factors can influence p-values, making direct comparisons challenging. Sample size is a critical factor; larger samples tend to yield smaller p-values. The variability of the data also affects p-values; higher variability can lead to larger p-values. The choice of statistical test and the assumptions underlying the test can also impact the resulting p-value. Addressing confounding variables and controlling for bias are crucial for reliable p-values.

2.4. The Importance of Effect Size: A Complementary Measure

Effect size measures the magnitude of an effect, providing a more direct and interpretable measure than p-values. Common effect size measures include Cohen’s d for differences between means, Pearson’s r for correlations, and odds ratios for categorical data. Comparing effect sizes across studies can provide a more meaningful comparison than comparing p-values alone. Combining effect sizes with confidence intervals offers a comprehensive view of the results.
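As an illustration of the first measure mentioned above, here is a minimal sketch of Cohen's d using the pooled standard deviation. The two samples are invented for the example, and the function name is an assumption rather than a library call.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Hypothetical measurements for two groups.
treatment = [2, 4, 6]
control = [1, 3, 5]
print(f"Cohen's d: {cohens_d(treatment, control):.2f}")  # 0.50
```

Unlike a p-value, this number does not shrink or grow just because more observations are collected; it estimates how far apart the groups are in standard-deviation units.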

3. Scenarios Where P-Value Comparisons Are Problematic

3.1. Different Sample Sizes: A Major Pitfall

Comparing p-values from studies with different sample sizes can be misleading. A study with a large sample size may produce a small p-value even for a small effect, while a study with a small sample size may fail to detect a large effect. It’s essential to consider the sample size when interpreting and comparing p-values. Power analysis can help determine the appropriate sample size for a study.
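A short sketch can show how strongly sample size drives the p-value. It assumes a simplified setting that is not from any particular study: a two-sided z-test with a known standard deviation of 1.0 and the same observed mean difference of 0.2 at every sample size.

```python
from math import erfc, sqrt


def two_sided_z_p_value(mean_diff: float, sd: float, n: int) -> float:
    """Two-sided p-value for a z-test of a mean difference with known sd."""
    z = mean_diff / (sd / sqrt(n))
    # For a standard normal Z, P(|Z| >= |z|) equals erfc(|z| / sqrt(2)).
    return erfc(abs(z) / sqrt(2))


# Same hypothetical effect (0.2 standard deviations), three sample sizes.
p_values = {n: two_sided_z_p_value(0.2, 1.0, n) for n in (20, 200, 2000)}
for n, p in p_values.items():
    print(f"n = {n:>4}: p = {p:.3g}")
```

The effect is identical in all three rows, yet the p-value moves from clearly non-significant to far below 0.05 as n grows, so the p-values alone say nothing about which study found the larger effect.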

3.2. Varying Methodologies: Apples and Oranges

When studies use different methodologies, statistical tests, or data collection methods, comparing p-values becomes problematic. Different methods can introduce different biases and sources of error, making it difficult to draw meaningful comparisons. Standardizing methodologies and using consistent protocols can improve the comparability of results.

3.3. Different Hypotheses: Comparing Unlike Entities

If studies test different hypotheses, comparing p-values is not valid. The p-value is specific to the hypothesis being tested, and comparing p-values across different hypotheses is like comparing apples and oranges. Clearly defining the research question and hypotheses is essential for meaningful comparisons.

3.4. The File Drawer Problem: Publication Bias

The file drawer problem refers to the tendency for studies with statistically significant results to be more likely to be published than studies with non-significant results. This can lead to an overestimation of the true effect and make comparisons across studies misleading. Addressing publication bias through methods like funnel plots and trim-and-fill analysis is important for meta-analysis.
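The overestimation described above can be reproduced in a small simulation. Everything in this sketch is an assumption chosen for illustration: a true effect of 0.2, a known standard deviation of 1.0, 2,000 small studies of 20 observations each, and a rule that only results with p < 0.05 get "published".

```python
import random
from math import erfc, sqrt
from statistics import mean

TRUE_EFFECT = 0.2   # assumed true mean difference
SD = 1.0            # assumed known standard deviation
N_PER_STUDY = 20
N_STUDIES = 2000

rng = random.Random(0)  # fixed seed so the run is repeatable


def run_study():
    """Simulate one small study and return (estimate, two-sided z-test p-value)."""
    sample = [rng.gauss(TRUE_EFFECT, SD) for _ in range(N_PER_STUDY)]
    estimate = mean(sample)
    z = estimate / (SD / sqrt(N_PER_STUDY))
    return estimate, erfc(abs(z) / sqrt(2))


studies = [run_study() for _ in range(N_STUDIES)]
published = [est for est, p in studies if p < 0.05]  # the rest stay in the drawer

all_mean = mean(est for est, _ in studies)
published_mean = mean(published)
print(f"mean estimate, all studies:       {all_mean:.2f}")
print(f"mean estimate, 'published' only:  {published_mean:.2f}")
```

Averaging every study recovers something close to the true effect, while averaging only the significant ones gives a noticeably larger figure, because a small study can only reach p < 0.05 when its estimate happens to be large.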

4. Best Practices for Comparing Statistical Evidence

4.1. Focus on Effect Sizes and Confidence Intervals

Instead of relying solely on p-values, focus on effect sizes and confidence intervals. Effect sizes provide a measure of the magnitude of the effect, while confidence intervals provide a range of plausible values for the effect. Comparing effect sizes and confidence intervals across studies can provide a more meaningful and informative comparison.
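To make this concrete, the sketch below turns an effect estimate and its standard error into a confidence interval using a normal approximation. The two "studies" and their numbers are hypothetical, and the function name is illustrative.

```python
from statistics import NormalDist


def confidence_interval(estimate: float, std_error: float, level: float = 0.95):
    """Normal-approximation confidence interval for an effect estimate."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    return estimate - z * std_error, estimate + z * std_error


# Two hypothetical studies with the same estimated effect but different precision.
for name, estimate, std_error in [("Study 1", 0.50, 0.25), ("Study 2", 0.50, 0.10)]:
    low, high = confidence_interval(estimate, std_error)
    print(f"{name}: effect = {estimate:.2f}, 95% CI = ({low:.2f}, {high:.2f})")
```

Both studies estimate the same effect; the intervals show that the second simply measures it more precisely, which is a more useful comparison than noting that its p-value is smaller.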

4.2. Conduct Meta-Analyses: Combining Evidence Systematically

Meta-analysis is a statistical technique for combining the results of multiple studies that address the same research question. Meta-analysis provides a more precise estimate of the effect size and can help identify sources of heterogeneity across studies. Following established guidelines for conducting and reporting meta-analyses ensures the validity and reliability of the results.
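One common way to combine studies is a fixed-effect, inverse-variance weighted average. The sketch below illustrates that idea under the assumption that all studies estimate the same underlying effect; the input numbers are invented, and a real meta-analysis would also examine heterogeneity and often use a random-effects model instead.

```python
from math import sqrt


def fixed_effect_pool(estimates, std_errors):
    """Inverse-variance weighted average of study estimates and its standard error."""
    weights = [1 / se**2 for se in std_errors]
    pooled = sum(w * e for w, e in zip(weights, estimates)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    return pooled, pooled_se


# Hypothetical effect estimates and standard errors from three studies.
estimates = [0.40, 0.55, 0.30]
std_errors = [0.20, 0.10, 0.25]
pooled, pooled_se = fixed_effect_pool(estimates, std_errors)
print(f"pooled effect = {pooled:.3f}, standard error = {pooled_se:.3f}")
```

More precise studies (smaller standard errors) get more weight, and the pooled standard error is smaller than that of any single study, which is where the gain in precision comes from.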

4.3. Consider Bayesian Approaches: An Alternative Framework

Bayesian statistics offers an alternative framework for hypothesis testing that focuses on updating beliefs in light of new evidence. Given a prior and the observed data, Bayesian methods yield posterior probabilities for hypotheses or parameter values rather than p-values, and many readers find these easier to interpret. Bayesian approaches also allow prior knowledge to be incorporated explicitly and can handle complex models, although the conclusions depend on the prior that is chosen.
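A minimal sketch of Bayesian updating is the Beta-Binomial model for a success rate. The setup here is assumed for illustration: a uniform Beta(1, 1) prior, 14 successes and 6 failures as made-up data, and a Monte Carlo estimate (with a fixed seed) of the probability that the rate exceeds 0.5.

```python
import random


def beta_posterior(prior_a, prior_b, successes, failures):
    """Beta prior + binomial data gives a Beta posterior with updated parameters."""
    return prior_a + successes, prior_b + failures


def prob_rate_exceeds(a, b, threshold, draws=20000, seed=0):
    """Monte Carlo estimate of P(rate > threshold) under a Beta(a, b) distribution."""
    rng = random.Random(seed)
    hits = sum(rng.betavariate(a, b) > threshold for _ in range(draws))
    return hits / draws


# Hypothetical data: 14 successes and 6 failures, starting from a uniform prior.
post_a, post_b = beta_posterior(1, 1, successes=14, failures=6)
posterior_mean = post_a / (post_a + post_b)
prob = prob_rate_exceeds(post_a, post_b, threshold=0.5)
print(f"posterior: Beta({post_a}, {post_b}), mean = {posterior_mean:.3f}")
print(f"estimated P(rate > 0.5 | data) = {prob:.3f}")
```

The output is a direct probability statement about the parameter, conditional on the prior and the data, which is a different quantity from a p-value and should not be compared with one.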

4.4. Evaluate the Quality of Studies: Assessing Validity and Reliability

When comparing statistical evidence, it’s essential to evaluate the quality of the studies being compared. Assess the study design, sample size, data quality, and potential sources of bias. Only compare evidence from studies that meet acceptable standards of validity and reliability. Using quality assessment tools and checklists can help standardize the evaluation process.

5. P-Values in Different Contexts: Practical Examples

5.1. Clinical Trials: Assessing Treatment Efficacy

In clinical trials, p-values are used to assess the efficacy of new treatments. However, it’s crucial to consider the effect size and clinical significance of the results. A statistically significant result with a small effect size may not be clinically meaningful. Comparing p-values across different clinical trials requires careful consideration of the study designs, patient populations, and outcome measures.

5.2. Observational Studies: Identifying Risk Factors

In observational studies, p-values are used to identify potential risk factors for diseases or other outcomes. However, observational studies are prone to confounding and bias, which can affect the validity of p-values. It’s essential to control for confounding variables and assess the potential for bias when interpreting p-values from observational studies.

5.3. A/B Testing: Optimizing User Experiences

In A/B testing, p-values are used to compare different versions of a website or app. However, it’s important to consider the practical significance of the results. A statistically significant result may not be worth implementing if the effect is small. Monitoring key performance indicators (KPIs) and conducting follow-up analyses can help validate the results of A/B tests.
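The gap between statistical and practical significance is easy to see with a pooled two-proportion z-test, one common choice for comparing conversion rates. The traffic and conversion counts below are invented, and the function name is illustrative.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (absolute lift of B over A, two-sided p-value) from a pooled z-test."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    return rate_b - rate_a, erfc(abs(z) / sqrt(2))


# Hypothetical test: 200,000 visitors per variant, 5.0% vs 5.2% conversion.
lift, p_value = two_proportion_z_test(10_000, 200_000, 10_400, 200_000)
print(f"absolute lift = {lift:.4f}, p-value = {p_value:.4f}")
```

With this much traffic, a lift of 0.2 percentage points comes out statistically significant; whether it justifies the cost of shipping and maintaining the change is a separate business question that the p-value cannot answer.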

5.4. Scientific Research: Advancing Knowledge

In scientific research, p-values are used to test hypotheses and advance knowledge. However, it’s crucial to interpret p-values cautiously and consider the broader context of the research. Replication and validation of findings are essential for building a robust body of evidence. Emphasizing transparency and open science practices can improve the reliability and credibility of research findings.

6. Real-World Examples of P-Value Misinterpretation

6.1. Example 1: The Vaccine Study

Consider two studies evaluating the effectiveness of different vaccines. Study A reports a p-value of 0.01, while Study B reports a p-value of 0.04. It might seem that Vaccine A is more effective because it has a smaller p-value. However, if Study A had a much larger sample size than Study B, the smaller p-value might simply reflect the increased statistical power, not necessarily a larger effect. Additionally, if the vaccines were tested on different populations with varying health conditions, the p-values would not be directly comparable.

6.2. Example 2: The Marketing Campaign

A company runs two marketing campaigns. Campaign X yields a p-value of 0.03, and Campaign Y yields a p-value of 0.06. Based solely on these p-values, it might be tempting to conclude that Campaign X was more successful. However, if Campaign Y targeted a more niche audience with higher potential returns, the slightly higher p-value might still represent a more valuable campaign overall. Focusing on metrics such as return on investment (ROI) and customer lifetime value (CLTV) would provide a more comprehensive assessment.

6.3. Example 3: The Medical Treatment

Two different medical treatments are tested for the same condition. Treatment Alpha results in a p-value of 0.02, while Treatment Beta results in a p-value of 0.07. Without further information, it might appear that Treatment Alpha is superior. However, if Treatment Beta has fewer side effects and is less invasive, it might be the preferred option despite the slightly higher p-value. Clinical judgment and patient preferences should also be considered in the decision-making process.

7. Improving Statistical Literacy: Empowering Informed Decisions

7.1. Education and Training: Building Statistical Skills

Improving statistical literacy is crucial for making informed decisions based on data. Education and training programs can help individuals develop the skills needed to understand and interpret statistical evidence. Emphasizing critical thinking and data analysis skills can empower individuals to evaluate statistical claims and avoid common pitfalls.

7.2. Communicating Statistical Results Clearly

Communicating statistical results clearly and transparently is essential for promoting understanding and trust. Avoid using jargon and technical terms that may be confusing to non-statisticians. Focus on presenting the results in a way that is accessible and meaningful to the intended audience. Using visualizations and plain language can help convey complex statistical concepts.

7.3. Promoting Open Science Practices: Enhancing Transparency

Promoting open science practices can enhance the transparency and reproducibility of research findings. Sharing data, code, and research protocols can allow others to verify and build upon the results. Encouraging pre-registration of studies can help reduce publication bias and increase the credibility of research.

7.4. Engaging Statisticians: Seeking Expert Guidance

Engaging statisticians can provide expert guidance on the design, analysis, and interpretation of statistical studies. Statisticians can help identify potential sources of bias and ensure that the appropriate statistical methods are used. Seeking statistical advice can improve the quality and validity of research findings.

8. The Role of COMPARE.EDU.VN in Data-Driven Decision Making

8.1. Providing Comprehensive Comparisons

COMPARE.EDU.VN is dedicated to providing comprehensive and objective comparisons across a wide range of topics. Whether you’re comparing products, services, or ideas, our platform offers detailed analyses to help you make informed decisions. Our comparisons go beyond simple feature lists, delving into the underlying data and providing clear, actionable insights.

8.2. Emphasizing Data Quality and Reliability

We prioritize data quality and reliability in our comparisons. Our team of experts carefully evaluates the sources of information and ensures that the data are accurate and up-to-date. We also highlight any potential limitations or biases in the data, empowering you to make informed decisions based on a clear understanding of the evidence.

8.3. Promoting Statistical Literacy

COMPARE.EDU.VN is committed to promoting statistical literacy among our users. We provide educational resources and clear explanations of statistical concepts to help you understand the data behind our comparisons. Our goal is to empower you to critically evaluate statistical claims and make informed decisions based on evidence.

8.4. Fostering Informed Decision-Making

Ultimately, our goal is to foster informed decision-making. By providing comprehensive comparisons, emphasizing data quality, and promoting statistical literacy, we empower you to make choices that are aligned with your needs and goals. Whether you’re a student, professional, or consumer, COMPARE.EDU.VN is your trusted partner in data-driven decision making.

9. Frequently Asked Questions (FAQ) about P-Values

1. What exactly does a p-value tell me?

A p-value tells you the probability of observing results as extreme as, or more extreme than, the results you obtained, assuming that the null hypothesis is true.

2. Is a smaller p-value always better?

Not necessarily. A smaller p-value indicates stronger evidence against the null hypothesis, but it doesn’t tell you anything about the size or practical significance of the effect.

3. What is the common threshold for statistical significance?

The most common threshold is 0.05. If the p-value is less than or equal to 0.05, the result is typically considered statistically significant.

4. Does a p-value tell me the probability that my hypothesis is true?

No. A p-value is the probability of observing data at least as extreme as yours, assuming the null hypothesis is true. It is not the probability that the null hypothesis is true, nor the probability that your alternative hypothesis is true.

5. Can I compare p-values from different studies directly?

It’s generally not recommended without careful consideration. Factors like sample size, study design, and statistical tests can influence p-values, making direct comparisons misleading.

6. What should I do if my p-value is close to the significance threshold?

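Consider the context of your study, the potential for bias, and the practical significance of your findings. Report the effect size and confidence interval alongside the p-value, and treat the result as inconclusive rather than forcing it onto one side of the threshold. Collecting more data or switching statistical methods after seeing the result inflates the false-positive rate unless it was planned in advance; a pre-specified replication study is a sounder way to confirm the finding.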

7. How does sample size affect p-values?

Larger sample sizes tend to produce smaller p-values, even for small effects. Therefore, it’s essential to consider the effect size along with the p-value, especially in studies with large samples.

8. Are there alternatives to using p-values in statistical analysis?

Yes, alternatives include effect sizes, confidence intervals, and Bayesian methods. These approaches provide additional information about the magnitude and uncertainty of the effect, which can be more informative than p-values alone.

9. What is the “file drawer problem,” and how does it affect p-values?

The “file drawer problem” refers to the tendency for studies with statistically significant results to be more likely to be published than those with non-significant results. This can lead to an overestimation of the true effect and make comparisons across studies misleading.

10. Where can I find more reliable comparisons of statistical evidence?

COMPARE.EDU.VN offers comprehensive and objective comparisons across a wide range of topics, emphasizing data quality and promoting statistical literacy to empower informed decision-making.

10. Conclusion: Making Informed Decisions with Statistical Evidence

In conclusion, while p-values are a valuable tool in statistical hypothesis testing, comparing them directly can be misleading without careful consideration of the context, methodology, and other relevant factors. Focusing on effect sizes, confidence intervals, and meta-analyses can provide a more comprehensive and informative approach to comparing statistical evidence. By improving statistical literacy and engaging expert guidance, we can empower ourselves to make informed decisions based on a clear understanding of the data. Remember, at COMPARE.EDU.VN, we’re here to help you navigate the complexities of data analysis and make the best choices for your needs.

For more comprehensive comparisons and expert insights, visit COMPARE.EDU.VN today. Our platform is designed to provide you with the information you need to make informed decisions, whether you’re comparing products, services, or ideas. Don’t leave your choices to chance. Trust COMPARE.EDU.VN to help you navigate the complexities of data and make the best decisions for your needs. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States or reach out via WhatsApp at +1 (626) 555-9090. Let COMPARE.EDU.VN be your guide to data-driven decision-making.