Are Sawtooth Probability Of Choice Scores Comparable Across Studies? COMPARE.EDU.VN explores the comparability of sawtooth probability of choice scores, shedding light on their application in diverse research scenarios. Discover how these scores can offer insights for making informed decisions, improving data quality, and driving significant advancements in research methodologies. Explore the nuances of choice-based conjoint analysis, utility scores, and data cleaning techniques.

1. Understanding Sawtooth Probability of Choice Scores

Sawtooth probability of choice scores are derived from conjoint analysis, a robust market research technique used to optimize product features and pricing. Conjoint analysis involves surveying participants, asking them to make choices among various product or service configurations. These choices are then used to determine the utility scores, which reflect the preferences of the survey takers. The probability of choice score indicates the likelihood that a survey respondent will select a particular option, given their preferences.

This score is essential for researchers because it provides a quantitative measure of how likely a respondent is to choose a specific option. By analyzing these scores, researchers can infer which product features are most valued and how different pricing strategies might impact consumer choices.

The calculation of these scores involves several steps:

- Utility Score Calculation: First, utility scores are calculated for each respondent, representing their preference for different attributes and levels.

- Logit Equation Application: Next, the logit equation is used to convert these utility scores into probabilities. This equation takes into account the utility of each option relative to the others, providing a probability that each option will be chosen.

- Root Likelihood Score: The root likelihood (RLH) score is then calculated, indicating the probability that a respondent would have made the selections they did, given their utility scores. This score serves a similar role to R-squared in regression, offering a measure of statistical fit.

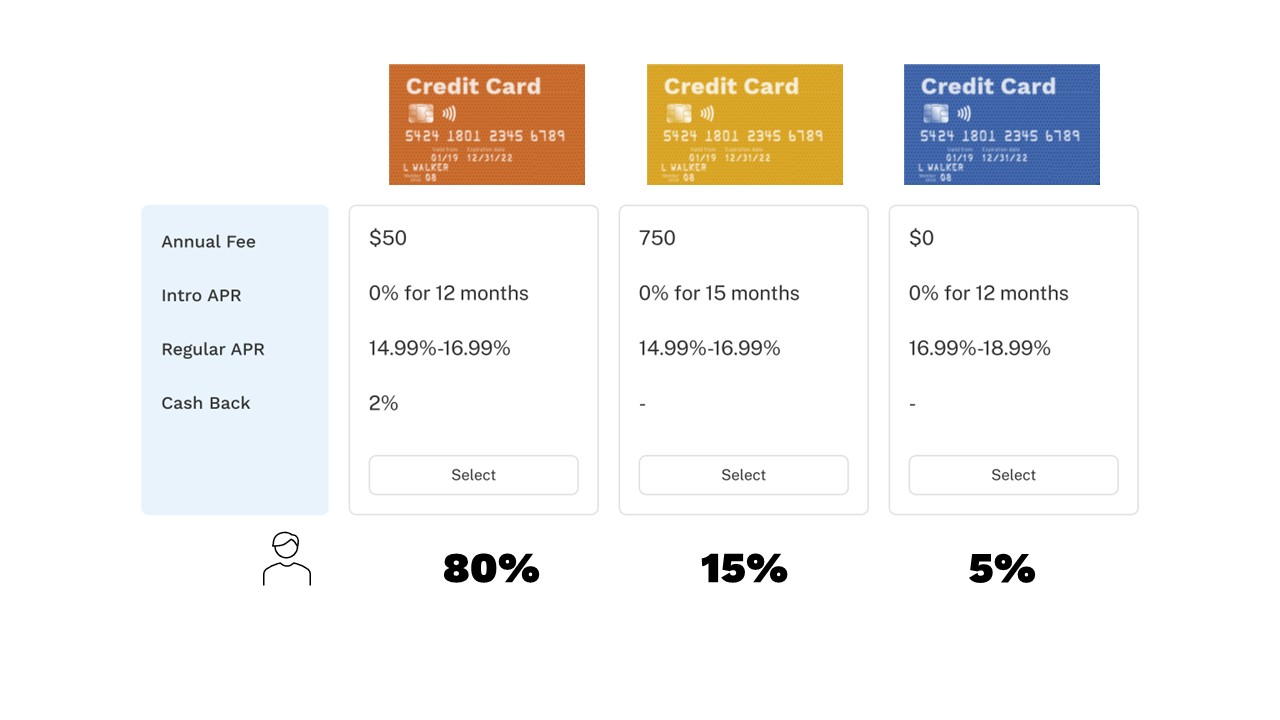

Example Scenario: Imagine a survey respondent is presented with three product options (A, B, and C) in a conjoint exercise. After calculating the utility scores, it is determined that option A has an 80% probability of being selected, option B has 15%, and option C has 5%. If the respondent chooses option A, their root likelihood score would be 0.8, significantly higher than the 33% chance probability if there were no preferences.

The root likelihood score is crucial for identifying high-quality data. A high score indicates that the respondent’s choices align with their stated preferences, while a low score might suggest that the respondent was not carefully considering the options.

2. The Importance of Data Quality in Conjoint Analysis

Data quality is paramount in conjoint analysis because the insights derived from this technique directly influence strategic decisions such as product development, pricing, and marketing. If the data is of poor quality, the resulting recommendations can be misleading, leading to potentially costly errors.

2.1 Consequences of Poor Data Quality

Poor data quality can arise from various sources, including:

- Random Responding: Survey takers might click through the survey quickly and randomly, without carefully considering the options.

- Lack of Attention: Respondents may not fully understand the questions or may lose focus during the survey.

- Bias: Respondents might intentionally skew their answers due to social desirability or other biases.

The consequences of using poor quality data include:

- Inaccurate Willingness to Pay Estimates: Incorrectly estimating how much consumers are willing to pay for enhanced features.

- Overestimation of Preference for Low-Quality Products: Misjudging the appeal of inferior products due to skewed data.

- Flawed Product Development Decisions: Making strategic errors in product design and feature selection based on unreliable data.

- Ineffective Marketing Strategies: Developing marketing campaigns that fail to resonate with the target audience because they are based on inaccurate consumer preferences.

2.2 Identifying and Mitigating Poor Data Quality

Several methods can be used to identify and mitigate poor data quality in conjoint analysis:

- Completion Time Analysis: Monitoring the time taken by survey takers to complete the choice tasks. Those who complete the survey too quickly may be flagged for further investigation.

- Root Likelihood Score Analysis: Calculating and analyzing the root likelihood scores to identify respondents whose choices appear random or inconsistent with their stated preferences.

- Attention Checks: Including attention check questions within the survey to identify respondents who are not paying attention.

- Data Cleaning Techniques: Implementing data cleaning procedures to remove or correct responses that are deemed unreliable.

By actively monitoring and addressing data quality issues, researchers can ensure that their conjoint analysis results are accurate and reliable, leading to better informed decisions.

2.3 Techniques for Improving Data Quality

To enhance the quality of data in conjoint studies, consider the following techniques:

- Clear Instructions: Provide clear and concise instructions to survey takers to ensure they understand the task.

- Engaging Design: Use an engaging survey design to maintain respondents’ attention and interest.

- Pilot Testing: Conduct pilot tests to identify and address any potential issues with the survey design or questions.

- Respondent Screening: Screen respondents to ensure they meet the target demographic and are knowledgeable about the product category.

- Incentives: Offer appropriate incentives to encourage respondents to provide thoughtful and honest answers.

Addressing data quality issues is a critical step in ensuring that conjoint analysis results are trustworthy and actionable. COMPARE.EDU.VN provides comprehensive comparisons and resources to help researchers and businesses make informed decisions based on high-quality data. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for more information.

Choice Based Conjoint task with three credit card concepts

Choice Based Conjoint task with three credit card concepts

3. Root Likelihood: A Statistical Fit Metric Explained

The root likelihood (RLH) score is a critical metric in conjoint analysis, serving as an indicator of how well a respondent’s choices align with their expressed preferences. It quantifies the probability that a survey participant would make the selections they did, given their individual utility scores. In essence, it measures the statistical fit of the respondent’s choices to their preferences.

3.1 Understanding Root Likelihood

The root likelihood score can be understood through a simple example. Suppose a respondent is presented with three product options: A, B, and C. If each option were equally likely to be chosen (i.e., no preference), the probability of selecting any one option would be 1/3, or approximately 33%. However, respondents typically have preferences, and conjoint analysis aims to uncover these preferences.

Once utility scores are calculated for each respondent, reflecting their preference for various attributes and levels, the logit equation is used to convert these scores into probabilities. For instance, if the utility scores suggest that option A has an 80% probability of being selected, option B has a 15% probability, and option C has a 5% probability, the root likelihood score for a respondent who chooses option A would be 0.8. This is significantly higher than the 33% probability that would be expected by random chance, indicating that the respondent’s choice aligns well with their stated preferences.

3.2 Calculation of Root Likelihood

In surveys where respondents complete multiple choice tasks, each with varying product options and probabilities, calculating the root likelihood score involves additional steps. The process entails:

- Utility Score Calculation: Determine the utility scores for each respondent based on their choices across all conjoint tasks.

- Probability Estimation: Use the logit equation to estimate the probability of selecting each option in each choice task, based on the respondent’s utility scores.

- Geometric Mean Calculation: Calculate the geometric mean of the probabilities across all choice tasks. This geometric mean is the respondent’s root likelihood score.

The geometric mean is used because it is less sensitive to extreme values and provides a more stable measure of overall fit. It is calculated as the nth root of the product of n probabilities.

3.3 Interpreting Root Likelihood Scores

The root likelihood score ranges from 0 to 1, with higher scores indicating a better fit between the respondent’s choices and their preferences. A score of 1 indicates a perfect fit, meaning the respondent always chose the option with the highest probability based on their utility scores. A score close to 0 suggests that the respondent’s choices were random or inconsistent with their preferences.

Interpreting the root likelihood score involves comparing it to a benchmark or threshold. One common approach is to compare the scores of real respondents to those of randomly generated “bots” or simulated respondents who have no preferences. By analyzing the distribution of root likelihood scores for these random respondents, a cutoff score can be established to identify respondents whose choices appear to be random.

3.4 Using Root Likelihood to Identify Poor Quality Data

Root likelihood scores are valuable for identifying poor quality survey responses, particularly those from respondents who may have randomly clicked through the choice tasks. By setting a cutoff score based on the distribution of scores from random respondents, researchers can flag real respondents whose scores fall below this threshold.

The steps for using root likelihood to identify poor quality data include:

- Generate Random Respondents: Create a dataset of random respondents by simulating survey responses without any underlying preferences.

- Run Conjoint Analysis: Perform a conjoint analysis on the combined dataset of real and random respondents, using a hierarchical Bayesian (HB) methodology to estimate utility scores for each respondent.

- Calculate Root Likelihood Scores: Calculate the root likelihood scores for all respondents, including the random respondents.

- Establish a Cutoff Score: Determine a cutoff score based on the distribution of root likelihood scores for the random respondents. A common approach is to use the 80th percentile score of the random respondents as the cutoff.

- Flag Poor Quality Responses: Flag any real respondents whose root likelihood scores fall below the cutoff score. These respondents are likely to have provided random or inconsistent responses and may be removed from the dataset.

By using root likelihood scores in this way, researchers can improve the overall quality of their data and ensure that their conjoint analysis results are more reliable.

3.5 Enhancing Data Quality with Root Likelihood

Root likelihood scores serve as a robust tool for data cleaning, enabling researchers to identify and eliminate unreliable responses. This process enhances the overall data quality, leading to more accurate and actionable insights. COMPARE.EDU.VN advocates for the use of such metrics to ensure the integrity of research findings. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for expert guidance on data analysis.

4. Identifying Poor Quality Survey Responses with Root Likelihood

The root likelihood (RLH) metric is an invaluable tool for identifying poor-quality survey responses in conjoint analysis. By understanding how to calculate and interpret RLH scores, researchers can effectively flag respondents who may have randomly clicked through the choice tasks, thereby improving the overall integrity of the data.

4.1 Generating a Random Respondent Dataset

The first step in using RLH to identify poor-quality responses involves creating a dataset of random respondents. These “bots” are simulated survey takers who have no preference for any options, allowing researchers to establish a baseline for what random responding looks like in their particular survey.

Generating a random respondent dataset typically involves the following steps:

- Simulate Survey Responses: Use software or algorithms to generate survey responses without regard to any underlying preferences. This can be done by randomly selecting options in each choice task.

- Ensure Representativeness: Make sure that the random responses are representative of the structure and format of the actual survey. This includes using the same number of choice tasks and the same set of product options.

- Create a Separate Dataset: Store the random responses in a separate dataset, distinct from the dataset of real survey responses.

Software tools like Sawtooth Software’s Lighthouse Studio can be used to automate this process, making it easy to generate a large number of random respondents with just a few clicks.

4.2 Running Conjoint Analysis on the Combined Dataset

Once the random respondent dataset has been created, the next step is to run a conjoint analysis on the combined dataset of real and random respondents. This involves using a statistical technique, such as hierarchical Bayesian (HB) analysis, to estimate utility scores for each respondent.

Hierarchical Bayesian analysis is particularly well-suited for this task because it can handle individual-level data while also borrowing information across respondents to improve the accuracy of the estimates. This is important because it allows researchers to estimate utility scores even for the random respondents, who have no underlying preferences.

When running the conjoint analysis, it is important to:

- Use Appropriate Software: Choose a software package that is designed for conjoint analysis and that supports hierarchical Bayesian estimation.

- Specify Model Parameters: Carefully specify the model parameters, such as the number of iterations and the convergence criteria, to ensure that the analysis converges to a stable solution.

- Check Model Fit: Evaluate the overall fit of the model to the data, using metrics such as the root mean squared error (RMSE) or the Bayesian information criterion (BIC).

4.3 Calculating Root Likelihood Scores

After running the conjoint analysis, the next step is to calculate the root likelihood (RLH) scores for all respondents, including the random respondents. This involves using the utility scores estimated from the conjoint analysis to calculate the probability of each respondent selecting each option in each choice task.

The root likelihood score is calculated as the geometric mean of the probabilities across all choice tasks. Specifically, for each respondent:

- Calculate Choice Probabilities: Use the logit equation to calculate the probability of selecting each option in each choice task, based on the respondent’s utility scores.

- Multiply Probabilities: Multiply the probabilities together across all choice tasks.

- Take the Nth Root: Take the nth root of the product, where n is the number of choice tasks.

The resulting value is the respondent’s root likelihood score.

4.4 Establishing a Cutoff Score

Once the root likelihood scores have been calculated, the next step is to establish a cutoff score to identify respondents who may have randomly clicked through the choice tasks. This cutoff score is typically based on the distribution of RLH scores for the random respondents.

A common approach is to use the 80th percentile RLH score for the random respondents as the cutoff. This means that any real respondent whose RLH score is lower than the 80th percentile of the random respondents is flagged as potentially providing random responses.

The rationale for using the 80th percentile is that it strikes a balance between identifying a reasonable number of poor-quality responses while also avoiding the risk of falsely flagging too many legitimate responses. However, the appropriate cutoff score may vary depending on the specific survey and the characteristics of the respondent population.

4.5 Flagging and Removing Poor Quality Responses

The final step in the process is to flag and remove any real respondents whose RLH scores fall below the cutoff score. These respondents are considered to have provided responses that are too similar to those of random respondents, suggesting that they may not have carefully considered the options in the choice tasks.

Before removing these respondents from the dataset, it is important to:

- Review the Data: Examine the responses of the flagged respondents to look for any other signs of poor-quality data, such as inconsistent responses or straight-lining.

- Consider the Impact: Consider the impact of removing these respondents on the overall results of the analysis. If removing a large number of respondents, it may be necessary to re-evaluate the survey design or data collection procedures.

- Document the Process: Document the process of identifying and removing poor-quality responses, including the rationale for the cutoff score and any other criteria used.

By following these steps, researchers can effectively use the root likelihood metric to identify and remove poor-quality survey responses, thereby improving the overall integrity of their data.

4.6 Ensuring Accurate Analysis

By carefully identifying and removing poor-quality survey responses, you can ensure that your conjoint analysis is based on reliable data. COMPARE.EDU.VN emphasizes the importance of thorough data cleaning to enhance the accuracy of analytical results. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for comprehensive assistance with data analysis and interpretation.

5. Comparability of Sawtooth Probability of Choice Scores Across Studies

The question of whether sawtooth probability of choice scores are comparable across studies is complex and depends on several factors. While these scores provide valuable insights within a single study, comparing them across different studies requires careful consideration.

5.1 Factors Affecting Comparability

Several factors can affect the comparability of sawtooth probability of choice scores across studies:

- Study Design: Differences in study design, such as the number of attributes, the number of levels per attribute, and the type of choice task (e.g., choice-based conjoint, adaptive conjoint), can significantly impact the resulting scores.

- Respondent Population: Variations in the characteristics of the respondent population, such as demographics, product category knowledge, and purchase behavior, can also affect the scores.

- Survey Instrument: Differences in the wording of the survey questions, the presentation of the choice tasks, and the use of visual aids can influence how respondents perceive the options and, consequently, their choices.

- Data Collection Methods: Variations in data collection methods, such as online surveys, in-person interviews, or mobile surveys, can introduce biases that affect the comparability of the scores.

- Analytical Techniques: Differences in the analytical techniques used to estimate utility scores and calculate choice probabilities can also impact the comparability of the scores.

5.2 Standardizing Scores for Comparison

To improve the comparability of sawtooth probability of choice scores across studies, researchers can employ several standardization techniques:

- Normalization: Normalizing the scores to a common scale, such as 0 to 1 or -1 to 1, can help to remove the effects of differences in the range of the scores.

- Z-Score Transformation: Transforming the scores to z-scores, which represent the number of standard deviations from the mean, can help to account for differences in the distribution of the scores.

- Benchmarking: Benchmarking the scores against a common reference point, such as a competitor’s product or a baseline scenario, can provide a more meaningful comparison.

- Calibration: Calibrating the scores using external data, such as market share data or sales data, can help to improve the accuracy and comparability of the scores.

5.3 Contextual Considerations

Even with standardization, it is essential to consider the contextual factors that may affect the comparability of the scores. These factors include:

- Market Conditions: Changes in market conditions, such as economic growth, competitive intensity, and regulatory environment, can affect consumer preferences and, consequently, the scores.

- Product Life Cycle: The stage of the product life cycle, such as introduction, growth, maturity, or decline, can also influence consumer preferences and the scores.

- Cultural Differences: Cultural differences in values, beliefs, and attitudes can affect how respondents perceive the options and, consequently, their choices.

5.4 Best Practices for Comparing Scores

When comparing sawtooth probability of choice scores across studies, researchers should follow these best practices:

- Document Study Details: Thoroughly document the study design, respondent population, survey instrument, data collection methods, and analytical techniques used in each study.

- Assess Data Quality: Assess the quality of the data in each study, using techniques such as completion time analysis, root likelihood score analysis, and attention checks.

- Standardize Scores: Standardize the scores using appropriate normalization, transformation, or calibration techniques.

- Consider Contextual Factors: Carefully consider the contextual factors that may affect the comparability of the scores.

- Interpret Results Cautiously: Interpret the results cautiously, recognizing the limitations of comparing scores across different studies.

5.5 Combining Data Across Studies

In some cases, it may be possible to combine data across multiple studies to increase the statistical power of the analysis. However, this requires careful consideration of the factors affecting comparability and the use of appropriate statistical techniques to account for differences in study design, respondent population, and survey instrument.

Techniques such as hierarchical modeling and meta-analysis can be used to combine data across studies while accounting for heterogeneity. However, these techniques require a deep understanding of the underlying statistical assumptions and the potential for bias.

5.6 Seeking Expert Guidance

Comparing sawtooth probability of choice scores across studies can be challenging, and it is often helpful to seek guidance from experts in conjoint analysis and market research. These experts can provide valuable insights into the factors affecting comparability and recommend appropriate standardization and analytical techniques.

COMPARE.EDU.VN offers expert guidance on comparing data across studies. Our team can assist you in interpreting results accurately. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for comprehensive support.

6. Ensuring Data Integrity in Conjoint Studies

Ensuring data integrity in conjoint studies is vital for obtaining reliable and valid results. Researchers must implement various quality control measures throughout the research process to minimize errors and biases.

6.1 Implementing Quality Control Measures

Several quality control measures can be implemented at different stages of a conjoint study:

- Survey Design:

- Clear and Concise Questions: Use clear and concise language in the survey questions to minimize confusion and ambiguity.

- Balanced Scales: Use balanced scales to avoid biasing responses in one direction.

- Randomization: Randomize the order of the choice tasks and the options within each task to minimize order effects.

- Attention Checks: Include attention check questions to identify respondents who are not paying attention.

- Respondent Screening:

- Targeted Recruitment: Recruit respondents who meet the target demographic and are knowledgeable about the product category.

- Screening Questions: Use screening questions to ensure that respondents meet the eligibility criteria.

- Quota Sampling: Use quota sampling to ensure that the sample is representative of the population.

- Data Collection:

- Pilot Testing: Conduct pilot tests to identify and address any potential issues with the survey design or data collection procedures.

- Monitoring Completion Rates: Monitor completion rates to identify any potential problems with the survey.

- Data Validation: Validate the data to ensure that it is complete and accurate.

- Data Analysis:

- Data Cleaning: Clean the data to remove or correct any errors or inconsistencies.

- Outlier Detection: Detect and remove outliers that may distort the results.

- Statistical Checks: Perform statistical checks to assess the validity and reliability of the data.

6.2 Minimizing Errors and Biases

In addition to implementing quality control measures, researchers should also take steps to minimize errors and biases throughout the research process:

- Response Bias:

- Anonymity: Ensure that respondents are anonymous to reduce social desirability bias.

- Neutral Language: Use neutral language in the survey questions to avoid leading respondents in one direction.

- Counterbalancing: Counterbalance the order of the questions to minimize order effects.

- Sampling Bias:

- Random Sampling: Use random sampling to ensure that the sample is representative of the population.

- Weighting: Use weighting to adjust for any imbalances in the sample.

- Stratification: Use stratification to ensure that the sample is representative of different subgroups in the population.

- Measurement Error:

- Reliable Measures: Use reliable measures to minimize measurement error.

- Multiple Measures: Use multiple measures to assess the same construct.

- Calibration: Calibrate the measures to improve their accuracy.

6.3 Validating Conjoint Results

To ensure the validity of conjoint results, researchers should consider the following:

- Internal Validity: Assess the internal validity of the results by examining the consistency of the findings across different parts of the survey.

- External Validity: Assess the external validity of the results by comparing the findings to external data, such as market share data or sales data.

- Predictive Validity: Assess the predictive validity of the results by using the findings to predict future behavior.

By implementing quality control measures, minimizing errors and biases, and validating the results, researchers can ensure that their conjoint studies produce reliable and valid findings.

6.4 Comprehensive Data Analysis

Data integrity is paramount for accurate conjoint analysis. COMPARE.EDU.VN is committed to providing thorough and reliable data analysis services to ensure the integrity of your research. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for more details.

7. The Role of Conjoint Analysis in Decision-Making

Conjoint analysis plays a critical role in decision-making across various industries, providing valuable insights into consumer preferences and guiding strategic choices.

7.1 Applications Across Industries

Conjoint analysis is used in a wide range of industries, including:

- Consumer Goods: Optimizing product features, pricing, and packaging.

- Healthcare: Designing new medical treatments, developing effective communication strategies, and assessing patient preferences.

- Financial Services: Designing new financial products, determining optimal pricing strategies, and evaluating customer preferences.

- Hospitality: Optimizing hotel amenities, pricing strategies, and service offerings.

- Automotive: Optimizing vehicle features, pricing, and design.

- Technology: Optimizing software features, pricing strategies, and user interfaces.

7.2 Guiding Strategic Choices

Conjoint analysis helps guide strategic choices by:

- Identifying Key Product Features: Determining which product features are most important to consumers.

- Optimizing Pricing Strategies: Determining the optimal pricing strategy to maximize revenue and profitability.

- Evaluating New Product Concepts: Assessing the potential success of new product concepts before launch.

- Understanding Competitive Positioning: Understanding how a product compares to competitors in terms of features, pricing, and overall value.

- Segmenting the Market: Identifying different segments of consumers with different preferences.

7.3 Maximizing Revenue and Profitability

By providing insights into consumer preferences, conjoint analysis helps businesses maximize revenue and profitability by:

- Designing Products That Meet Consumer Needs: Creating products that are tailored to the needs and preferences of the target market.

- Pricing Products Effectively: Pricing products at a level that maximizes revenue and profitability while remaining competitive.

- Targeting Marketing Efforts: Targeting marketing efforts to the segments of consumers who are most likely to be interested in the product.

- Improving Customer Satisfaction: Improving customer satisfaction by providing products and services that meet their needs and expectations.

7.4 Enhancing Decision-Making Processes

Conjoint analysis enhances decision-making processes by providing:

- Data-Driven Insights: Providing data-driven insights that are based on consumer preferences rather than intuition or guesswork.

- Objective Evaluation: Providing an objective evaluation of different options, allowing decision-makers to compare the pros and cons of each option.

- Quantifiable Results: Providing quantifiable results that can be used to track progress and measure success.

- Improved Communication: Improving communication among stakeholders by providing a common understanding of consumer preferences.

7.5 Informing Product Development

Conjoint analysis is invaluable for informing product development decisions, ensuring new products align with consumer needs and preferences. COMPARE.EDU.VN assists businesses in leveraging conjoint analysis for product optimization. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for expert insights.

8. Overcoming Limitations of Sawtooth Probability of Choice Scores

While sawtooth probability of choice scores are a powerful tool for understanding consumer preferences, they have limitations that researchers should be aware of.

8.1 Acknowledging Limitations

Some of the key limitations of sawtooth probability of choice scores include:

- Context Dependence: The scores are context-dependent and may not generalize to different situations or market conditions.

- Simplification of Reality: The scores are based on a simplified representation of reality and may not capture all of the factors that influence consumer behavior.

- Assumptions: The scores are based on certain assumptions, such as the assumption that consumers are rational and make choices based on their preferences.

- Data Quality: The scores are sensitive to data quality and may be affected by errors or biases in the data.

- Complexity: The analysis can be complex and require specialized expertise.

8.2 Strategies for Mitigation

Several strategies can be used to mitigate the limitations of sawtooth probability of choice scores:

- Use Multiple Methods: Use multiple methods to validate the findings and provide a more complete picture of consumer preferences.

- Consider Contextual Factors: Consider the contextual factors that may affect the scores and interpret the results accordingly.

- Test Assumptions: Test the assumptions underlying the scores and adjust the analysis as needed.

- Ensure Data Quality: Ensure data quality by implementing quality control measures and cleaning the data.

- Seek Expert Guidance: Seek expert guidance to ensure that the analysis is conducted correctly and the results are interpreted appropriately.

8.3 Combining with Other Data

Combining sawtooth probability of choice scores with other data sources can provide a more comprehensive understanding of consumer behavior. Some data sources that can be combined with conjoint analysis data include:

- Demographic Data: Combining demographic data with conjoint analysis data can help to identify different segments of consumers with different preferences.

- Purchase Data: Combining purchase data with conjoint analysis data can help to validate the results and predict future behavior.

- Social Media Data: Combining social media data with conjoint analysis data can provide insights into consumer attitudes and perceptions.

- Market Research Data: Combining market research data with conjoint analysis data can provide a more complete picture of the market landscape.

8.4 The Importance of Continuous Validation

The validity of sawtooth probability of choice scores should be continuously validated by comparing the findings to external data and monitoring the results over time. This can help to ensure that the scores remain accurate and relevant.

8.5 Seeking External Validation

External validation is crucial for strengthening the reliability of conjoint analysis findings. COMPARE.EDU.VN advocates for incorporating external data sources to enhance the validity of your research. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for assistance with integrating diverse data sources.

9. Case Studies: Applying Sawtooth Probability of Choice Scores

Examining case studies that demonstrate the application of sawtooth probability of choice scores in real-world scenarios can provide valuable insights into their practical use and benefits.

9.1 Case Study 1: Optimizing Product Features in the Automotive Industry

A major automotive manufacturer used conjoint analysis to optimize the features of a new car model. The study involved surveying potential customers about their preferences for various features, such as engine type, fuel efficiency, safety features, and infotainment systems.

The results of the conjoint analysis showed that safety features and fuel efficiency were the most important factors influencing consumer preferences. Based on these findings, the manufacturer decided to prioritize these features in the design of the new car model.

The manufacturer also used the conjoint analysis results to determine the optimal pricing strategy for the new car model. By pricing the car competitively while still offering the features that were most important to consumers, the manufacturer was able to maximize revenue and profitability.

9.2 Case Study 2: Designing Effective Communication Strategies in Healthcare

A healthcare organization used conjoint analysis to design effective communication strategies for promoting a new medical treatment. The study involved surveying patients about their preferences for different communication channels, message content, and delivery styles.

The results of the conjoint analysis showed that patients preferred to receive information about the new treatment from their doctor, using a clear and concise message that emphasized the benefits of the treatment. Based on these findings, the healthcare organization developed a communication strategy that focused on educating doctors about the new treatment and providing them with materials to share with their patients.

The healthcare organization also used the conjoint analysis results to tailor the communication strategy to different segments of patients. By understanding the preferences of different patient groups, the organization was able to develop more effective communication strategies that resonated with each group.

9.3 Case Study 3: Optimizing Hotel Amenities in the Hospitality Industry

A major hotel chain used conjoint analysis to optimize the amenities offered to guests. The study involved surveying guests about their preferences for various amenities, such as room size, Wi-Fi access, breakfast options, and fitness facilities.

The results of the conjoint analysis showed that Wi-Fi access and breakfast options were the most important factors influencing guest satisfaction. Based on these findings, the hotel chain decided to invest in improving Wi-Fi access and offering a wider variety of breakfast options.

The hotel chain also used the conjoint analysis results to determine the optimal pricing strategy for different room types. By pricing rooms competitively while still offering the amenities that were most important to guests, the hotel chain was able to maximize revenue and occupancy rates.

9.4 Learning from Real-World Applications

These case studies illustrate the practical applications of conjoint analysis and the benefits of using sawtooth probability of choice scores to inform decision-making. By learning from these real-world examples, researchers and businesses can better understand how to apply conjoint analysis to their own situations.

9.5 Expert Insights on Conjoint Applications

These case studies show the power of conjoint analysis. COMPARE.EDU.VN offers expert insights to help you apply these techniques effectively. Contact us at 333 Comparison Plaza, Choice City, CA 90210, United States, or Whatsapp: +1 (626) 555-9090, or visit our website COMPARE.EDU.VN for comprehensive guidance.

10. Frequently Asked Questions (FAQ)

Q1: What is conjoint analysis and how are sawtooth probability of choice scores derived from it?

A1: Conjoint analysis is a market research technique used to determine the value consumers place on different features of a product or service. Sawtooth probability of choice scores are derived from conjoint analysis by asking respondents to make choices between different product configurations. These choices are then used to calculate utility scores, which reflect the preferences of the survey takers.

Q2: Why is data quality so important in conjoint analysis?

A2: Data quality is critical in conjoint analysis because the results are used to make strategic decisions about product development, pricing, and marketing. If the data is of poor quality, the results can be misleading, leading to potentially costly errors.

Q3: What is root likelihood (RLH) and how is it used to identify poor quality survey responses?

A3: Root likelihood (RLH) is a statistical fit metric that indicates the probability that a respondent would have made the selections they did, given their utility scores. It is used to identify poor quality survey responses by comparing the RLH scores of real respondents to those of randomly generated “bots” or simulated respondents who have no preferences.

Q4: How can sawtooth probability of choice scores be standardized for comparison across different studies?

A4: To improve the comparability of sawtooth probability of choice scores across studies, researchers can employ several standardization techniques, such as normalization, z-score transformation, benchmarking, and calibration.

Q5: What factors can affect the comparability of sawtooth probability of choice scores across studies?

A5: Several factors can affect the comparability of sawtooth probability of choice scores across studies, including study design, respondent population, survey instrument, data collection methods, and analytical techniques.

Q6: What are some strategies for mitigating the limitations of sawtooth probability of choice scores?

A6: Strategies for mitigating the limitations of sawtooth probability of choice scores include using multiple methods, considering contextual factors, testing assumptions, ensuring data quality, and seeking expert guidance.

Q7: How can sawtooth probability of choice scores be combined with other data sources to provide a more comprehensive understanding of consumer behavior?

A7: Sawtooth probability of choice scores can be combined with other data sources, such as demographic data, purchase data, social media data, and market research data, to provide a more comprehensive understanding of consumer behavior.

Q8: What are some real-world applications of sawtooth probability of choice scores?

A8: Real-world applications of sawtooth probability of choice scores include optimizing product features in the automotive industry, designing effective communication strategies in healthcare, and optimizing hotel amenities in the hospitality industry.

Q9: What quality control measures can be implemented to ensure data integrity in conjoint studies?

A9: Quality control measures that can be implemented to ensure data integrity in conjoint studies include careful survey design, targeted respondent recruitment, pilot testing, data validation, and data cleaning.

Q10: How does compare.edu.vn assist in ensuring data quality and accurate analysis in conjoint studies?

A10: COMPARE.EDU